The agent is not the user. Stop building UIs that pretend it is.

Walk into any review of an agent-driven workflow product in mid-2025 and the same screen is on the projector.

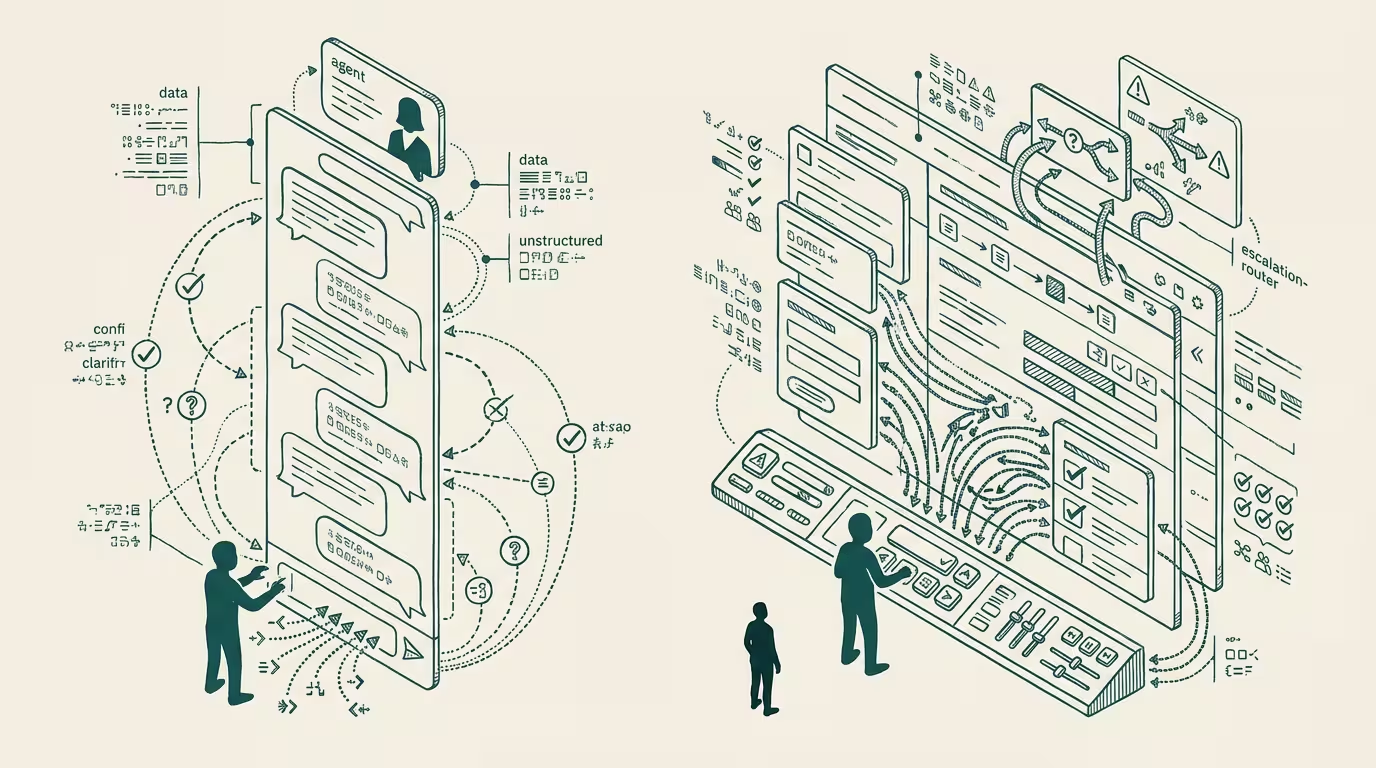

A chat panel down the right third of the application. The operator types a request. The agent responds with a paragraph explaining what it is going to do. The operator confirms, or edits, or asks a clarifying question. The agent writes another paragraph. Eventually some action happens in the rest of the UI. The product manager points at the chat panel as evidence of the AI integration. The engineering lead points at the chat panel as evidence of the agent's reasoning being legible. Investors see the chat panel and assume the product is on the right side of the foundation-model wave.

Nobody in the room asks the only question that matters, which is whether the chat panel should be there at all.

It should not. The chat panel is residue. It is the residue of a category error that ran through the first eighteen months of the post-ChatGPT product cycle, and the industry has not yet corrected for it. The error is the assumption that because the foundation-model interface most users encountered first was a chat interface, the right interface for any product that uses a foundation model is also a chat interface. That assumption is wrong. It is wrong in a way that gets more wrong the more agentic the underlying workflow gets.

The chat UI is a reasonable interface for a single-shot question-and-answer use case where the human is genuinely the consumer of the model's output. It is the wrong interface for the use case where a human operator is using a model to do work in a system, because in that use case the human is not the consumer of the model's output. The system is. The human is the operator who chose the work, monitors the work, and intervenes when the work goes off the rails. The operator and the consumer are not the same role. The interface that serves the consumer pessimizes the operator.

The diagnostic is simple. If your operator is reading the chat to know what the agent did, you have shipped the consumer interface to a user who needs the operator interface. The right surface for the operator is the work itself: the rows that got updated, the records that got created, the file that got modified, the diff against the prior state, the log of what the agent attempted and what it succeeded at and what it failed at and where it stopped to wait. The chat is at best the audit trail. At worst it is the noise that distracts the operator from inspecting the audit trail. Treating the chat as the primary surface trains the operator to read prose summaries instead of inspecting state, which is the opposite of what an operator-grade interface should be training the operator to do.

The deeper problem is that the chat UI implicitly models the agent as a peer in the conversation. Two parties, one channel, alternating turns. That is the right model for two humans, or for a human and a customer-service bot, or for a human and a question-answering assistant. It is the wrong model for an agent that is doing work the human delegated.

The right model for that case is closer to the model an executive has of a delegated task. A task got assigned. The task is in some state. The state is observable. The observation does not require the executive and the assignee to write paragraphs at each other. The task has a status. The status has a history. The history has artifacts. The artifacts are inspectable. The whole interaction is asymmetric. The operator initiated the work, the agent is doing the work, and the legibility of the work flows from the work itself, not from a transcript of the agent narrating the work.
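As a rough illustration of that delegated-task model, here is a minimal sketch in which a task carries a status, the status has a history, and the history sits next to inspectable artifacts. Every name in it (`Status`, `Event`, `Task`, `transition`, `attach`, the example task title and file name) is hypothetical and chosen for the example; it is not drawn from any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    # Hypothetical status set; a real product would pick its own.
    QUEUED = "queued"
    RUNNING = "running"
    WAITING = "waiting"
    DONE = "done"
    FAILED = "failed"


@dataclass
class Event:
    """One entry in a task's status history."""
    at: datetime
    status: Status
    note: str = ""


@dataclass
class Task:
    """A delegated unit of work: status, history, and artifacts."""
    title: str
    status: Status = Status.QUEUED
    history: list[Event] = field(default_factory=list)
    artifacts: dict[str, str] = field(default_factory=dict)

    def transition(self, status: Status, note: str = "") -> None:
        # Record every status change so the history is observable later.
        self.status = status
        self.history.append(Event(datetime.now(timezone.utc), status, note))

    def attach(self, name: str, location: str) -> None:
        # Artifacts are named pointers to things the operator can inspect.
        self.artifacts[name] = location


task = Task("Reconcile March invoices")
task.transition(Status.RUNNING, "matching invoices to payments")
task.transition(Status.WAITING, "third-party API has not responded")
task.attach("diff", "invoices-march.diff")
```

Nothing in this structure requires the agent to narrate. The operator reads `task.status`, scans `task.history`, and opens an artifact when something looks off.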

The chat UI also gets the asynchrony wrong. A real agent-driven workflow runs at a different cadence than a real human conversation. Some sub-tasks complete in two hundred milliseconds. Some take twelve minutes because they are waiting for a third-party API to respond. Some take six hours because they are batched for an overnight job. A chat UI rendered around the assumption of turn-taking does not handle any of these well. The two-hundred-millisecond response feels glib. The twelve-minute response sits in a "thinking..." state that gives the operator no useful information about what is actually happening. The six-hour response is impossible to model in a chat at all, which is why every product that hits a real agent-driven workflow eventually bolts a notification system, an inbox view, or a dashboard onto the side of the chat. Those bolt-ons are the actual interface. The chat is the legacy ornament.

The defense of the chat usually arrives in one form. The operator needs to communicate with the agent, and natural language is the right medium for that communication. The first half of the claim is true. The second half is not.

Natural language is the right medium for one specific kind of communication: the underspecified initial request, where the operator does not know precisely what they want and the agent has to elicit the specifics. Once the request has been refined into something the agent can act on, the rest of the communication is about state, not language. The operator wants to know what the agent did, what it has not done yet, what it is currently doing, and what has gone wrong. None of those are best expressed as prose. They are best expressed as structured data: a task list, a progress indicator, a status badge, a diff, a notification, a row that changed color when its state changed. The chat panel is a holdover from the natural-language-as-input phase, applied to the natural-language-as-output phase where it does not belong.
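To make "state, not language" concrete, here is a small sketch of one of those structured surfaces: a field-level diff between a record before and after the agent touched it. The function name `diff_record` and the example record are assumptions made up for illustration.

```python
from typing import Any


def diff_record(
    before: dict[str, Any], after: dict[str, Any]
) -> dict[str, tuple[Any, Any]]:
    """Return {field: (old, new)} for each field added, removed, or changed."""
    missing = object()  # sentinel distinguishing "absent" from any real value
    changes: dict[str, tuple[Any, Any]] = {}
    for key in sorted(before.keys() | after.keys()):
        old = before.get(key, missing)
        new = after.get(key, missing)
        if old != new:
            # Absent fields are reported as None; this simplification means a
            # field explicitly set to None looks the same as an absent one.
            changes[key] = (
                None if old is missing else old,
                None if new is missing else new,
            )
    return changes


before = {"status": "open", "owner": "ana", "amount": 120}
after = {"status": "paid", "owner": "ana", "amount": 120, "paid_at": "2025-06-01"}
changes = diff_record(before, after)
# changes == {"paid_at": (None, "2025-06-01"), "status": ("open", "paid")}
```

The operator sees two changed fields at a glance. A prose paragraph carrying the same information would have to be read, parsed, and trusted.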

The honest version of an operator-grade agent UI looks much more like a project-management tool than a messaging app. A queue of tasks. A view of in-flight work, with what each in-flight item is currently waiting on. A history of completed work, browsable and searchable. An audit trail per task, which is the right place for the natural-language reasoning of the agent to live, accessible when the operator wants to inspect it and out of the way when the operator does not. A way to inject new instructions into in-flight work, which is the place where natural language genuinely earns its place again.
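The surfaces listed above can be sketched as a handful of views over one collection of work items. This is an illustrative outline under stated assumptions, not a reference design: `WorkItem`, `Board`, the three state names, and every method name are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class WorkItem:
    item_id: int
    title: str
    state: str = "queued"  # assumed states: queued | in_flight | done
    waiting_on: str = ""   # what an in-flight item is currently blocked on
    audit_trail: list[str] = field(default_factory=list)   # agent reasoning lives here
    instructions: list[str] = field(default_factory=list)  # operator injections


class Board:
    """Operator-facing views over all work items."""

    def __init__(self) -> None:
        self.items: dict[int, WorkItem] = {}

    def add(self, title: str) -> WorkItem:
        item = WorkItem(len(self.items) + 1, title)
        self.items[item.item_id] = item
        return item

    def queue(self) -> list[WorkItem]:
        return [i for i in self.items.values() if i.state == "queued"]

    def in_flight(self) -> list[tuple[str, str]]:
        # Each in-flight item is shown with what it is waiting on.
        return [
            (i.title, i.waiting_on)
            for i in self.items.values()
            if i.state == "in_flight"
        ]

    def history(self, query: str = "") -> list[WorkItem]:
        # Completed work, filtered by a case-insensitive title search.
        return [
            i
            for i in self.items.values()
            if i.state == "done" and query.lower() in i.title.lower()
        ]

    def inject(self, item_id: int, instruction: str) -> None:
        # The one place natural language flows from operator to agent mid-task.
        item = self.items[item_id]
        if item.state != "in_flight":
            raise ValueError("instructions can only be injected into in-flight work")
        item.instructions.append(instruction)
        item.audit_trail.append(f"operator instruction: {instruction}")


board = Board()
a = board.add("Update vendor records")
b = board.add("Export quarterly report")
a.state, a.waiting_on = "in_flight", "vendor API response"
board.inject(a.item_id, "skip vendors marked inactive")
```

The audit trail is stored per item rather than rendered as the primary surface, so the agent's reasoning is available on inspection and out of the way otherwise.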

The interface looks less like a chatbot and more like the dashboard of a tool that was already good for delegating work to a team of humans. The difference is that the team is now an agent, and the dashboard has to be more legible than the human-team version was, because the agent's failure modes are stranger and the operator has to be able to spot them.

Two practical corollaries follow from naming the antipattern.

The first: any product manager whose AI integration screen is dominated by a chat panel should treat that as evidence the product has not yet been redesigned for the operator, only retrofitted for the model. The retrofit is the easy ship. The redesign is the harder ship, and it is the one that determines whether the product is durable.

The second: any operator who finds themselves writing long prompts back and forth with an agent inside a chat panel should treat that as the system telling them the interface is failing them. The right move at that point is not to write better prompts. It is to push the workflow into a structured form that the agent can act on without the operator narrating, and to demand of the product the structured surfaces an operator-tier UI requires.

The chat panel will keep shipping for another year or two. The design pattern is established. The engineering cost of replicating it is low. It demos well to investors who have not yet seen the right alternative. None of those are reasons to keep building it.

The agent is not the user. The operator is. Build for the operator.

—TJ