Why the 'AI killed the search engine' framing is premature.

Every six to nine months for the last twenty years somebody has run the “AI killed the search engine” headline. The 2004 version was about Google’s answer-extraction; the 2009 version was about Wolfram Alpha; the 2011 version was about Siri; the 2016 version was about voice assistants and the ambient-computing thesis; the 2020 version was about GPT-3-shaped few-shot retrieval; the 2023 version was about ChatGPT and Bing; the early-2024 version is about Perplexity, Arc Search, and the latest crop of AI-native consumer-search products. Each version carries the same structural claim: the ten-blue-links interface is obsolete and the synthesizing-AI interface is the replacement. Each version has, by the next product cycle, turned out to be wrong about the timeline. Some of the takes will eventually be right. The interesting question is why they keep being premature, and the interesting answer is not the one the takes themselves keep reaching for.

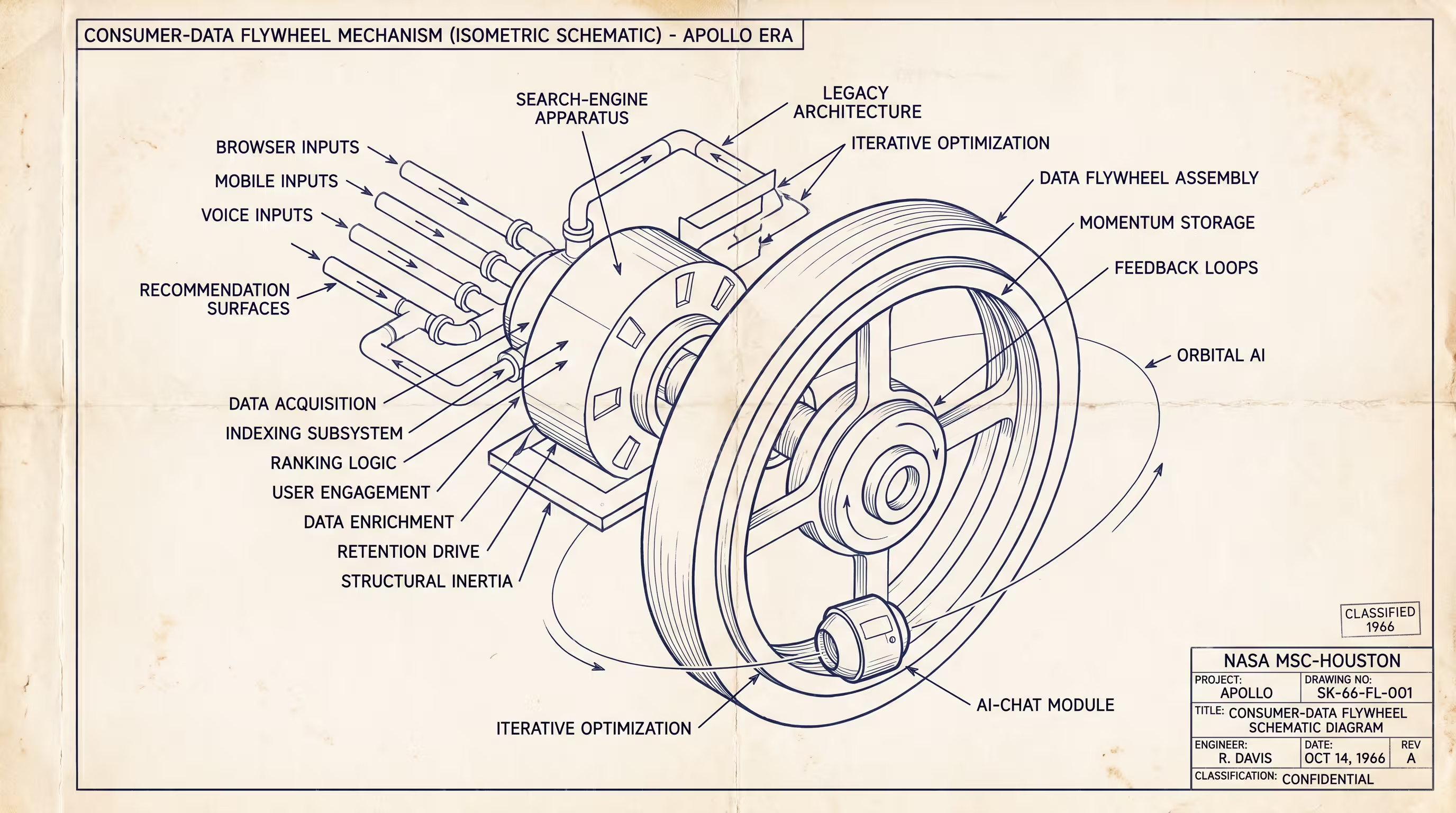

The framing the takes keep using is product quality: the AI synthesis is now good enough that users will prefer it to the link list. This framing has been directionally true for two years. ChatGPT does in fact produce a better answer to most factual questions than the Google SERP did in 2022. Perplexity does in fact produce a more useful research synthesis on a complex question than ten clicks through Wikipedia and a StackExchange thread. The product-quality argument is not wrong. It is also not the binding constraint. The binding constraint, the one that makes the AI-killed-search framing keep being premature, is the consumer-data flywheel that Google has been building for twenty-five years and that the AI-native consumer-search products have not yet replicated.

Search at the scale Google operates is not a model problem. It is a behavioral-data problem. Every query Google has ever served, every click that followed, every dwell-time signal, every refinement query within the same session, every cross-session-same-user pattern, every device-and-location-and-time-of-day correlation with intent, has been compounding into the underlying ranking model for two and a half decades. The consumer-search competitor that ships in 2024 has, on the day of launch, zero of that data. The launch product can be better at the abstract question of synthesis, and the launch product can be much better at the specific narrow surface of factual lookup, and the launch product can still be uncompetitive on the long-tail personalization and intent-modeling work that Google’s data accretion solves. The behavioral flywheel is the moat, not the model.

This is the structural reason the AI-killed-search takes keep being premature, and it is also the structural reason the eventual disruption, when it happens, will not look like the takes describe. The disruption does not happen when the AI synthesis is good enough. The disruption happens when some new entrant accumulates comparable behavioral data on a new substrate that does not look like the Google-search-bar substrate. Possible substrates are the chat interface (OpenAI is on a path to accumulate chat-behavioral data at meaningful scale and may, by 2026 or 2027, have a flywheel of its own), the agentic-tool-use surface (every API call an agent makes on behalf of a user generates the same kind of behavioral signal), the device-OS-level surface (Apple and Google both have years of OS-level interaction data that the AI-native search startups do not), and the social-graph-on-top-of-content surface (ByteDance has been building this kind of flywheel through TikTok and could pivot toward search). None of those substrates looks, on its surface, like search. All of them are the actual fight.

I want to be specific about the timing. The AI-killed-search takes will keep being premature for the next two to four years because the data flywheels on the new substrates are still accumulating and have not yet reached Google-equivalent scale. They will, inevitably, reach that scale on at least one of the substrates at some point in the late 2020s. When they do, the disruption will arrive and will be steeper than the prior generation of takes assumed, because the investment Google has accumulated in the ten-blue-links surface is largely non-portable to the new surface. Google’s twenty-five years of search data does not directly help its agentic-search product, because the queries an agent issues do not look like the queries a human types into a search bar. The data is the moat for the old surface; it is not the moat for the new surface. So the disruption, when it lands, lands harder than the incumbent expects. And Google, which has successfully weathered six prior rounds of AI-killed-search takes and will likely weather the current one, may not weather the round whose timeline finally turns out to be right.

The operator-level read for anyone building in this space is that the question to ask is not “is the AI synthesis better than the SERP?” The question to ask is “what is the new behavioral substrate my product accumulates data on, and is the accumulation rate fast enough to reach Google-scale flywheel competitiveness before the funding window closes?” Most of the AI-native consumer-search startups in early 2024 are not asking this question. They are competing on synthesis quality against a product (Google’s SERP) that they are correctly identifying as deteriorating. They are not building the substrate-side data flywheel that would actually displace Google, because the substrate-side flywheel is a longer-cycle, more capital-intensive game with a less immediate product win. The startups that win the long run will be the ones whose product shape is not directly comparable to Google’s ten-blue-links surface, because the non-comparability is the thing that lets the new data moat accrete.

The two companies in 2024 that are most plausibly building this kind of moat are OpenAI (whose chat interface is, whether or not they intend it to be, the accumulation surface for the next ten-year flywheel) and Apple (whose on-device OS-level interaction data is, whether or not they intend to monetize it as search, the substrate for an agentic-search product that no AI-native startup can compete with). Perplexity and Arc Search are doing real product work and may build durable businesses, but they are competing against Google on the wrong axis. The axis where Google can be displaced is not synthesis quality. The axis is the substrate-side data flywheel that makes the next interface paradigm work, and that is not primarily a startup game in 2024. It is a game between OpenAI, Apple, and possibly ByteDance, with Microsoft and Amazon as the other plausible entrants.

The closing point I want to land is the meta-pattern. The AI-killed-search takes keep being premature because they keep mistaking the visible product surface (synthesis quality) for the binding constraint (a data flywheel on a new substrate). This is the same mistake the “X killed Y” takes have been making for thirty years across every consumer-tech category. Mobile killed desktop, except it didn’t, not on the timeline the takes assumed; voice killed text, except it didn’t; VR killed flat screens, except it didn’t. “AI killed search” will, in the same shape, eventually be true and will, on the way there, generate a steady stream of premature obituaries. The eventual transition will be shaped by the substrate-side flywheel, not by front-end synthesis quality. The framing the takes keep reaching for is the wrong framing. The framing the actual disruption will use is the one nobody is yet writing about, because it does not, today, look like the thing it will replace.

—TJ