AI radiology is real. AI-in-radiology-as-product is mostly not. The FDA data explains the gap.

The U.S. FDA maintains a public list of authorized AI-and-machine-learning-enabled medical devices. The list passed 700 entries by late 2024, with the substantial majority concentrated in radiology and adjacent imaging-class applications. The trade-press read of this number is that AI in radiology has shipped at scale and is transforming the practice. Both halves of the read are partially true and substantially misleading.

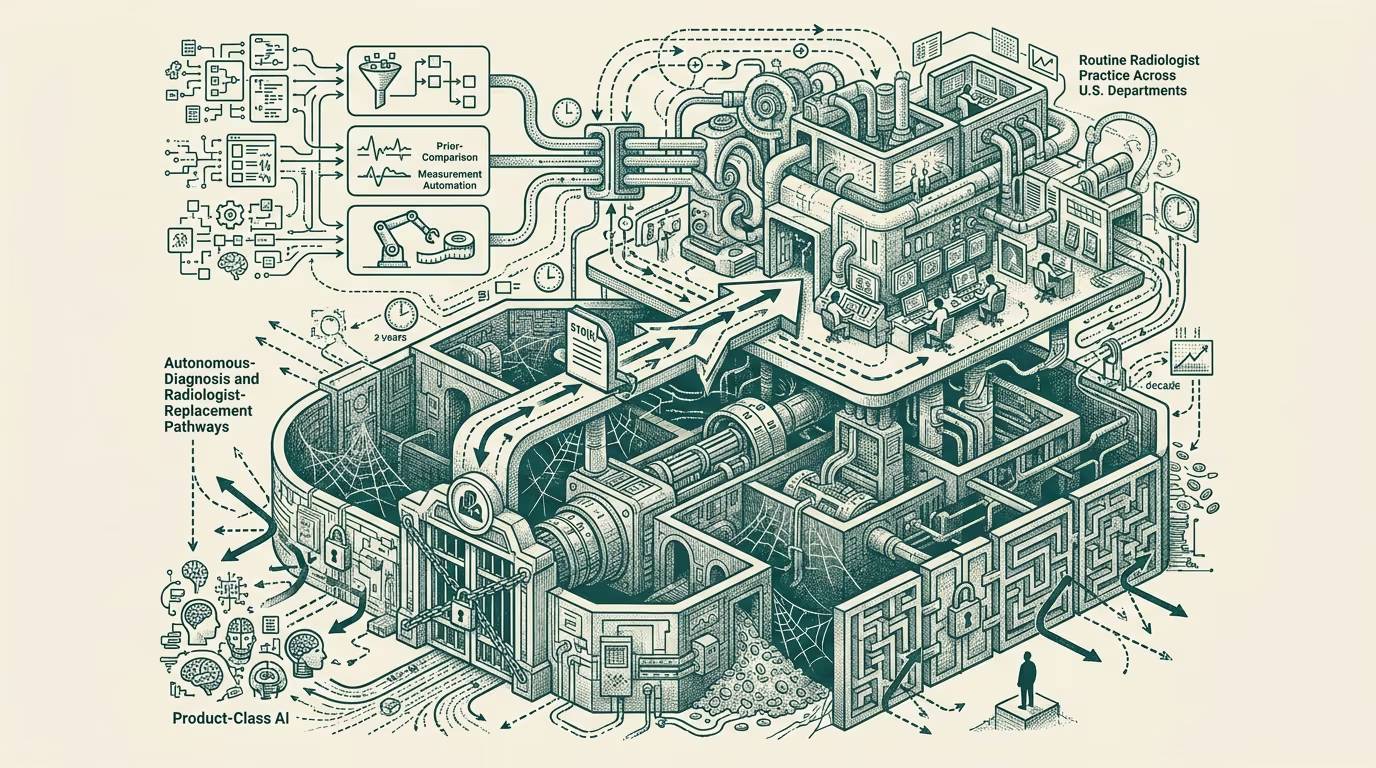

What the approval data actually shows is two structurally different things happening underneath the headline count. AI as a tool that helps the radiologist (workflow triage, prior-comparison surfacing, second-read augmentation, measurement-and-segmentation automation) shipped widely and is in routine clinical use across U.S. radiology departments. AI as a product that replaces the radiologist or operates autonomously without radiologist oversight (the autonomous-diagnosis vision, the standalone-AI-radiology vision, the radiologist-replacement vision the venture-class has been funding for a decade) did not ship at scale despite the visible pitch-deck activity.

This explainer walks through the approval landscape, what tool-class AI is actually deployed, what product-class AI did not ship, and the structural reasons the gap exists and is likely to persist for the foreseeable future.

What the FDA approval data actually shows

The 700-plus entries run heavily through the 510(k) pathway, which is the FDA's clearance mechanism for devices that are substantially equivalent to a legally marketed predicate device. The 510(k) pathway is appropriate for tool-class AI products: a workflow-triage tool that helps radiologists prioritize their reading list is substantially equivalent to existing workflow-triage tools, and the AI augmentation gets cleared on that basis. The De Novo pathway, which authorizes genuinely novel low-to-moderate-risk devices that have no predicate, has authorized a much smaller number of AI-in-radiology products, with most of those still being augmentation tools rather than replacement products. The PMA pathway, which approves the highest-risk Class III devices, has approved very few AI-in-radiology products because few have been submitted under that pathway.
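One way to check the pathway split against the FDA's list is to tally entries by submission-number prefix: 510(k) numbers begin with K, De Novo numbers with DEN, and PMA numbers with P. The Python sketch below assumes the submission numbers have already been pulled out of the list as plain strings. The function names and the sample IDs are hypothetical placeholders for illustration, not real devices and not an FDA-provided tool.

```python
from collections import Counter

# FDA submission-number prefixes: DEN = De Novo, K = 510(k), P = PMA.
# Anything else falls into "other" rather than being guessed at.
PREFIX_TO_PATHWAY = (
    ("DEN", "De Novo"),
    ("K", "510(k)"),
    ("P", "PMA"),
)


def pathway(submission_number: str) -> str:
    """Map one submission number to its regulatory pathway by prefix."""
    number = submission_number.strip().upper()
    for prefix, name in PREFIX_TO_PATHWAY:
        if number.startswith(prefix):
            return name
    return "other"


def tally(submission_numbers) -> Counter:
    """Count how many submissions fall under each pathway."""
    return Counter(pathway(n) for n in submission_numbers)


# Hypothetical placeholder IDs, NOT real devices; swap in the real column.
sample = ["K000001", "K000002", "DEN000001", "P000001", "K000003"]

for name, count in tally(sample).most_common():
    print(f"{name}: {count}")
```

Run against the full list, a tally like this should make the 510(k) concentration visible without relying on the headline count.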

What holds up in the regulatory data is that the AI-in-radiology category is shipping through the regulatory pathway designed for incremental tool-class products, not through the pathway designed for novel high-risk replacement products. The vendors building the replacement-class products have largely not submitted them for the higher-bar regulatory review, and the products that have been submitted at the higher bar have largely not cleared it. The regulatory read matches the deployment read: tools shipped, replacements did not.

What tool-class AI is actually deployed

Several categories of tool-class AI are in routine clinical use across U.S. radiology departments in 2024-2025. Workflow-triage tools (Aidoc, Viz.ai, RapidAI, several others) prioritize the radiologist's reading queue based on suspected urgency from the AI's preliminary read, with the radiologist still reading every study and producing the diagnostic interpretation. Measurement-and-segmentation tools automate the manual measurement work the radiologist used to do, with the radiologist verifying the measurements before they enter the report. Prior-comparison tools surface relevant prior imaging studies and align them to the current study, saving the radiologist the manual prior-search work. Second-read tools provide a parallel AI interpretation that the radiologist can compare against their own reading, with the AI's flag serving as a quality-assurance check rather than a primary read.

These tools save radiologist time, reduce certain classes of human error, and produce measurable workflow improvements at the radiology departments that have deployed them. They do not replace the radiologist; they assist the radiologist. The clinical workflow still has the radiologist as the diagnostic-and-reporting authority for every study. The AI is a productivity-and-quality tool inside that workflow.

What product-class AI did not ship

The vision the venture-class has been funding for a decade is the AI radiologist: a system that reads imaging studies and produces final diagnostic reports without radiologist oversight, freeing the human radiologist for higher-complexity work or eliminating the radiologist role entirely for routine studies. This vision has not shipped in U.S. clinical practice in any meaningful volume.

A handful of standalone-AI products have been deployed in narrow scope (chest X-ray triage in some emergency-department settings, autonomous-screening for diabetic retinopathy in primary care) where the regulatory environment, the reimbursement structure, and the clinical-workflow integration aligned to support the deployment. These are exceptions, narrow in scope, and not the radiologist-replacement vision the broader product category has been promising.

The structural reasons the product-class vision did not ship are several and reinforcing. The regulatory-clearance bar for autonomous diagnosis is substantially higher than for tool-class augmentation, requiring the kind of clinical-trial evidence that most AI-in-radiology vendors have not generated. The reimbursement structure does not have a CPT-code path for AI-as-primary-reader at the volume that would make the unit economics work. The malpractice-and-liability exposure when an autonomous AI misreads a study is a problem that has not been resolved at the institutional level. The radiologist workforce has been politically and economically defended at the professional-society level, with the result that hospital-and-practice procurement is structurally cautious about products that frame themselves as radiologist-replacement.

The combined effect is that the autonomous-AI radiology product runs into four substantial structural barriers (regulatory, reimbursement, liability, professional), any one of which could block deployment, and all four of which would need to be solved for the vision to reach scale. The barriers are not loosening on the near horizon, and the vendors that have been pitching the radiologist-replacement vision have largely not built the engineering or the commercial case to overcome any of the four.

What this leaves the operator class with

What holds up about AI-in-radiology in 2024-2025 is that the tool-class category is real, deployed, growing, and economically meaningful. The product-class category is mostly pitch-deck, with narrow exceptions, and the four structural barriers are not going to dissolve quickly enough to validate the radiologist-replacement vision the venture-class has been funding.

For founders building in the AI-in-radiology category, the practical advice is to build for the tool-class category and price the round against the tool-class economics. Vendors pitching the radiologist-replacement vision into the four structural barriers are betting on a deployment trajectory that is not going to materialize on the timeline the pitch deck implies. Vendors building tool-class products that genuinely save radiologist time, reduce error rates, or improve workflow throughput are building for a buyer who is ready to pay, a regulatory pathway that supports the clearance, and a deployment environment that is ready to absorb the product.

For investors evaluating the category, the evidence suggests pricing the radiologist-replacement vision substantially below the pitch-deck framing, and pricing the tool-class augmentation substantially closer to existing healthcare-IT economics. The two halves of the AI-in-radiology category have different unit economics, different deployment timelines, different regulatory profiles, and different defensibility postures. Treating them as a single category produces wrong investment decisions.

The 700-plus FDA approvals are real. They are not, however, the radiologist-replacement vision the trade press sometimes implies they are. They are the tool-class category that is shipping. The product-class category is mostly elsewhere, and the structural barriers are real. The careful operator read separates the two and prices each one accordingly. The pitch-deck-driven read does not, and produces the wrong decisions.

—TJ