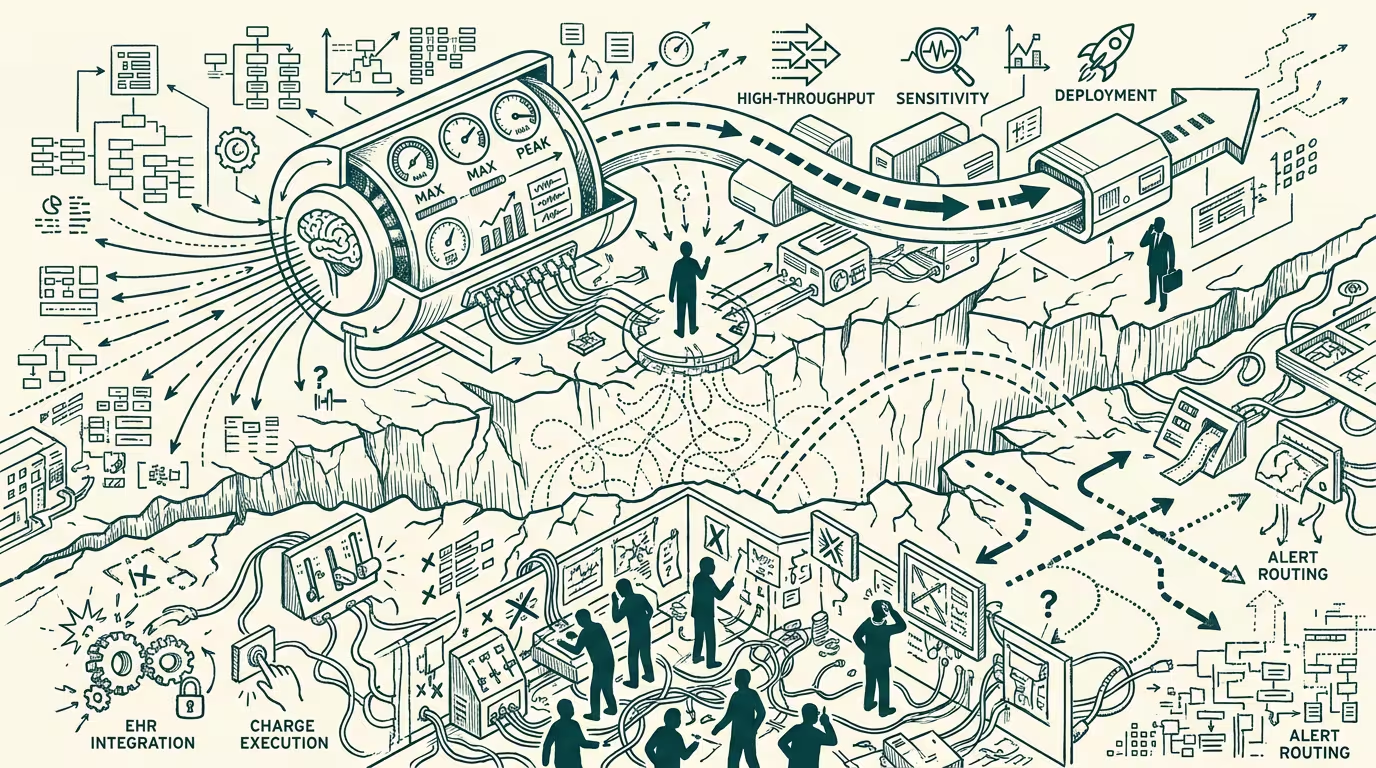

AI triage in the ED keeps not landing. The deployment gap is the reason.

The AI-triage-in-the-emergency-department pitch has been recycled through healthcare-AI fundraising decks since roughly 2018, and as of August 2024 it still has not produced a deployment-tier vendor with a viable product. The trade press writes it up every eighteen months as if the breakthrough were imminent. The deck describes a clean-looking workflow: the AI ingests the chief complaint, the vital signs, and the prior-encounter history, runs the triage decision in milliseconds, and outputs an acuity score equivalent to the Emergency Severity Index (ESI) that the triage nurse can override. The deck shows a 30% reduction in median door-to-room time. The deck shows a 12% reduction in misclassification rate. The deck cites a 2019 Stanford pilot.

The deployment never matches the deck. The structural reasons are worth naming because the same reasons apply to a long tail of adjacent AI-deployment categories.

The first reason is that the ED is not the test bed the deck assumes. The deck assumes a clean stream of patient complaints, structured vital signs, and a triage nurse who is the bottleneck. The actual ED has none of those. The patient complaint is freeform, often translated, often partial, often delivered through a family member. The vital signs are taken on devices whose measurement variance the vendor's training set did not account for. The triage nurse is not the bottleneck; the bottleneck is room availability downstream of triage. An AI that triages faster does not shorten total ED time, because the patient still waits for a room. The pitch deck's "30% reduction in door-to-room" was measuring the wrong thing.
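The room-availability argument is the standard tandem-queue effect: a pipeline moves no faster than its slowest stage. A minimal sketch, assuming a deterministic pipeline with hypothetical numbers (one arrival every 6 minutes, one triage nurse, 10 treatment rooms each held for 90 minutes, 200 patients), shows what a faster triage step buys when rooms are the constraint. The `Params` and `mean_length_of_stay` names and every parameter value are illustrative, not drawn from any real ED or vendor.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Params:
    # All defaults are hypothetical, chosen only to make the bottleneck visible.
    n_patients: int = 200
    arrival_gap_min: float = 6.0  # one arrival every 6 minutes
    triage_min: float = 5.0       # minutes one triage nurse spends per patient
    n_rooms: int = 10             # treatment rooms downstream of triage
    room_min: float = 90.0        # minutes a patient occupies a room


def mean_length_of_stay(p: Params) -> float:
    """Mean door-to-departure time (minutes) for a deterministic
    arrival -> triage (one nurse) -> room (n_rooms in parallel) pipeline,
    served first-come, first-served at both stages."""
    triage_free_at = 0.0
    rooms = [0.0] * p.n_rooms  # min-heap of times at which each room becomes free
    heapq.heapify(rooms)
    total_stay = 0.0
    for i in range(p.n_patients):
        arrival = i * p.arrival_gap_min
        # Stage 1: triage starts once both the patient and the nurse are available.
        triage_start = max(arrival, triage_free_at)
        triage_free_at = triage_start + p.triage_min
        # Stage 2: the patient takes the earliest-free room, waiting if none is free.
        room_start = max(triage_free_at, heapq.heappop(rooms))
        departure = room_start + p.room_min
        heapq.heappush(rooms, departure)
        total_stay += departure - arrival
    return total_stay / p.n_patients


base = mean_length_of_stay(Params())
fast_triage = mean_length_of_stay(Params(triage_min=1.0))  # 80% faster triage
more_rooms = mean_length_of_stay(Params(n_rooms=15))       # same triage, more rooms
print(base, fast_triage, more_rooms)  # 380.0 376.0 95.0
```

Under these assumptions, cutting triage time from 5 minutes to 1 lowers mean door-to-departure time only from 380 to 376 minutes, while adding five rooms lowers it to 95. Real arrivals are bursty and room times vary, so a stochastic simulation would be the natural next step; the deterministic version only makes the bottleneck visible.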

The second reason is the deployment gap between the AI vendor's performance claim and operating reality. The 12% misclassification reduction was measured retrospectively on a clean, labeled dataset. In production, on the messy stream of actual ED triage encounters, the misclassification rate looks closer to baseline. The vendor knows this. The vendor's deck does not show it. The hospital that deploys the AI on the deck's claim discovers the gap in month three of the pilot. The pilot does not get extended. The vendor moves on to the next prospect. The pattern recurs, with new vendors, on the same eighteen-month cycle.

The third reason is the failure-mode cost. The cohort that pays the cost of an ED-triage-AI failure is the patient who got under-triaged. That patient does not appear in the vendor's marketing deck. Their case appears, eventually, in a malpractice claim, an OIG referral, a state-AG investigation, or the small fraction of cases that produce a published case study. The AI vendor does not absorb that cost; the hospital's malpractice insurer does, and the insurer prices it into the next renewal cycle. By year two of deployment, the hospital is paying more in malpractice premium than the AI deployment saved it, and the deployment quietly winds down.

These three reasons compound. The wrong-test-bed problem makes the performance numbers unreliable. The deployment-gap problem means even reliable performance numbers do not translate into operations. The failure-mode-cost problem means the operator absorbs risk the vendor's pricing did not include. The category has not produced a viable deployment because the structural conditions for one have not yet been met.

What would it take to produce a viable AI-triage-in-the-ED product?

Three things. First, training-and-validation data drawn from the actual operating environment of the ED, not from a clean retrospective dataset. That means longitudinal data collection across multiple sites, multiple shift patterns, multiple translation contexts. The data-collection effort is, in operator terms, the actual moat of the eventual winner.

Second, a deployment integration that solves the room-availability bottleneck rather than the triage-nurse bottleneck. The AI that improves operations is the AI that helps with patient throughput across the entire ED workflow, not just the triage step. That is a different product category from "AI triage."

Third, a contractual structure in which the AI vendor absorbs some fraction of the malpractice and failure-mode cost. A vendor that absorbs no risk is pricing its product as a marketing pilot rather than a production deployment, and leaving the operator to absorb all of the risk. A risk-sharing contract aligns vendor and operator on the operational outcome, and the vendor willing to sign one is the vendor that built a product that actually works.
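The alignment argument can be made concrete with a toy cost-split model. This is a sketch under stated assumptions: the `Contract` type, the function names, and every number (50,000 encounters a year, a flat 2,000 cost per under-triage event, a 500,000 annual fee, under-triage rates of 2% and 1%) are hypothetical, and real malpractice exposure is not a simple per-event multiple.

```python
from dataclasses import dataclass


@dataclass
class Contract:
    annual_fee: float         # what the operator pays the vendor per year
    vendor_risk_share: float  # fraction (0..1) of failure-mode cost the vendor absorbs


def expected_failure_cost(encounters: int, under_triage_rate: float,
                          cost_per_event: float) -> float:
    """Expected annual cost of under-triage events (claims, premium loading),
    modeled crudely as a flat cost per event."""
    return encounters * under_triage_rate * cost_per_event


def vendor_net(c: Contract, failure_cost: float) -> float:
    """Vendor revenue after absorbing its share of the failure-mode cost."""
    return c.annual_fee - c.vendor_risk_share * failure_cost


def operator_cost(c: Contract, failure_cost: float) -> float:
    """Operator's total outlay: the fee plus the failure cost it still carries."""
    return c.annual_fee + (1.0 - c.vendor_risk_share) * failure_cost


# Hypothetical inputs: two products that differ only in under-triage rate.
weaker = expected_failure_cost(50_000, 0.02, 2_000.0)
stronger = expected_failure_cost(50_000, 0.01, 2_000.0)

for share in (0.0, 0.3):
    c = Contract(annual_fee=500_000.0, vendor_risk_share=share)
    gain = vendor_net(c, stronger) - vendor_net(c, weaker)
    print(f"share={share:.1f}  vendor gain from better product: {gain:,.0f}")
```

With a zero risk share, the vendor's net is identical whichever product it ships, so nothing in the contract rewards the better one. With a 30% share, halving the under-triage rate is worth 300,000 a year to the vendor under these numbers, which is the alignment the paragraph above describes.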

None of those three is happening in 2024. On the available evidence, they will take another five to seven years to converge. The category keeps recycling not because the technology is wrong but because the structural conditions for deployment have not been built. The operators who recognize this are the ones who do not waste 2024 budget on the next AI-triage pilot. The operators who do not recognize it are the ones paying the malpractice-renewal cost of the lesson.

The larger point is that the same structural-condition gap applies to a long list of adjacent AI-in-healthcare categories: AI radiology pre-reads, AI clinical decision support, AI prior-authorization automation. Each one has the same wrong-test-bed problem, the same deployment-gap problem, and the same failure-mode cost asymmetry. The category that produces a viable deployment will be the one where some operator commits to the longitudinal data-collection investment, the workflow-integration investment, and the risk-sharing contract that aligns vendor and operator. Without those three, the category cycles through eighteen-month deck recycling for another decade. With them, it produces a winner inside three years. The right question for an operator evaluating any healthcare-AI category in 2024 is which of the three structural conditions the candidate vendor is actually building toward, and the honest answer for most vendors is none.

The cohort that bears the cost of the deployment gap is not in the vendor's deck, and it rarely shows up in the trade press either. The cost is paid quietly, in claims not flagged on time and outcomes that could have been better. From the vendor's side, the deployment cycle reads as a pilot that did not extend; from the patient's side, as a system that did the same thing it always does.

—TJ