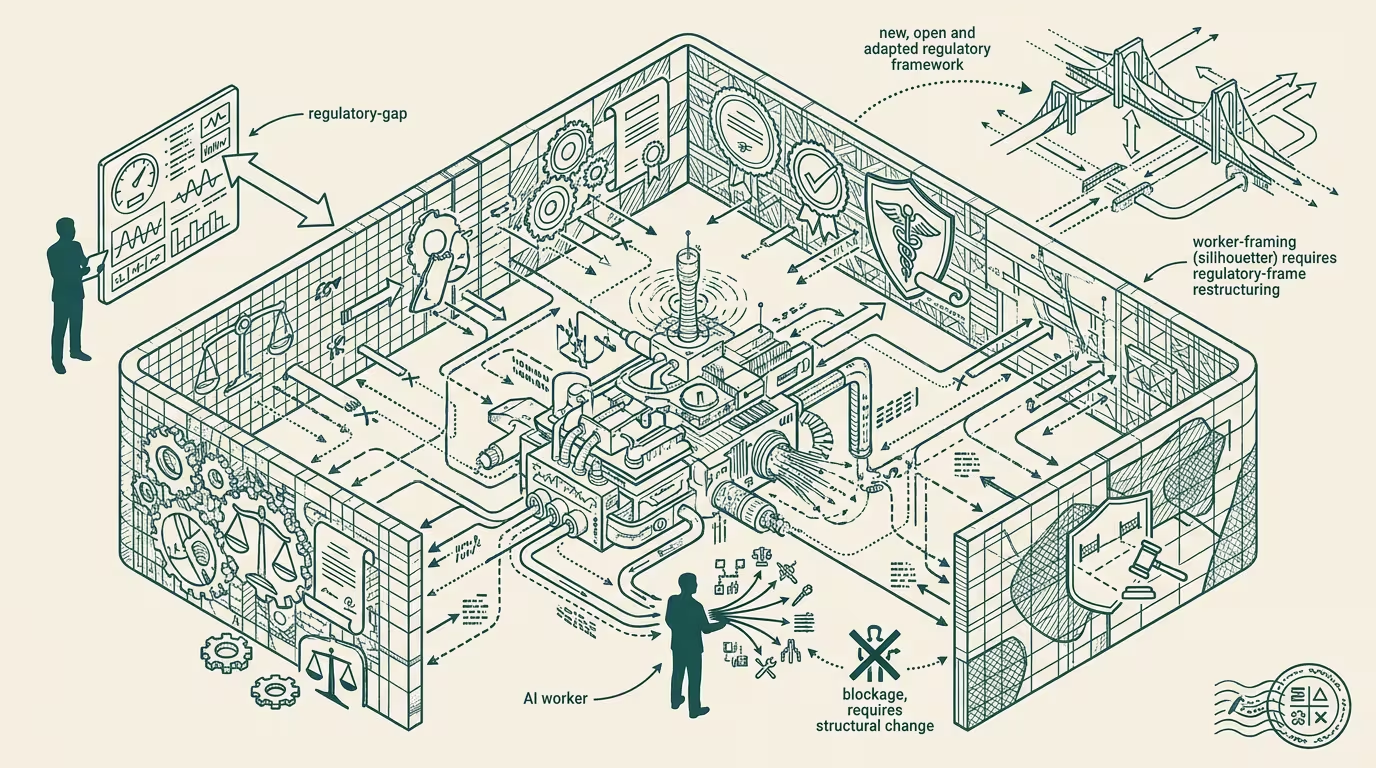

AI workers are unfortunately slowed down by law. At least in some domains.

Marc Andreessen and a16z's bio+health team published a series of pieces in early 2025 framing AI's entry into healthcare as "not as tools but as workers, full stack." The argument: the next wave of clinical AI is not a tool that augments the clinician; it is a worker that replaces some fraction of clinical labor. The framing is provocative on purpose. The legal implications, which the a16z framing does not engage with in substance, are the load-bearing thing.

If AI is a worker, the regulatory frame that applies is employment law, professional-licensing law, and scope-of-practice law. None of those frames is currently designed to handle AI as the worker. All of them have to extend, and the extension is not going to happen on the AI-capability curve.

What it means for AI to be a worker, legally, in healthcare:

The clinician currently practices under a professional license issued by a state medical board. The license has scope-of-practice rules. It has continuing-education requirements. It has malpractice coverage tied to it. It has disciplinary procedures. It has a defined supervisor relationship for non-physician clinical staff (PAs, NPs, RNs). Every piece of that apparatus assumes the worker is a human.

If AI is the worker, every piece of that apparatus has to either extend or be replaced. The state medical board has to decide whether the AI gets licensed (under what criteria, who certifies, who renews). Scope-of-practice rules have to decide what AI workers can do that humans currently do (write prescriptions, order tests, perform diagnoses). Malpractice has to decide who carries the AI worker's coverage (the deployer, the vendor, a new entity). Discipline has to decide what happens when the AI worker makes a mistake (deactivation, retraining, vendor liability, customer remediation).

None of those decisions are simple. All of them take years.

The structural variable is which clinical domains move fast and which move slow.

Some domains move fast. Radiology is the canonical example. The AI radiology pre-read is, in 2025, deployed at scale in some health systems. The legal frame extended to handle it: the AI's pre-read is a non-binding draft that the licensed radiologist signs off on. The licensed radiologist remains the worker of record. The AI is, legally, a tool. The framing fits the existing scope-of-practice apparatus. Domains with similar shape (pathology, cardiology imaging, ophthalmology imaging) move at similar speed.

Some domains move slow on purpose. Primary care is the canonical example. An AI primary-care worker is, in operating terms, doing what a physician or NP does: taking a history, ordering tests, prescribing, managing chronic conditions, coordinating referrals. That work is heavily licensed, heavily regulated, and politically defended by the existing professional class. The state medical boards that would have to certify an AI primary-care worker are governed by physicians who, on operating-economics grounds, do not want to license a competitor. The political dynamic favors slow.

Some domains move slow on regulatory grounds. Mental health is the canonical example. The therapeutic relationship is, in the legal frame, protected by professional-conduct rules that the AI worker cannot reliably honor. The state licensing boards for psychologists, social workers, and licensed counselors are operating under regulatory frames that explicitly prohibit unlicensed practice. Extending the frame to cover AI workers is, in legal-realist terms, a multi-state regulatory project that takes a decade.

Some domains will see AI workers slip through because the regulatory frame did not anticipate them. Care navigation, discharge coordination, prior-auth handling, patient communications, and clinical documentation and coding all sit outside the licensed-clinical scope but are clearly clinical-adjacent. AI workers operating in those categories do not need a state license. They get deployed faster. The category compresses on the deployment-speed curve.

The read that survives is that the a16z framing of "AI as worker, full stack" is correct on a 10-year curve and wrong on a 2-3-year curve. Some domains will see AI workers operating at scale by 2027. Some domains will not see AI workers at scale until 2032-2035. The variation is not capability-driven. It is regulation-driven.

The structural read for healthtech founders building on the AI-as-worker thesis: pick the domain on the deployment-speed curve, not on the capability curve. The AI worker that ships fastest is the AI worker in the unlicensed, clinical-adjacent category. The AI worker that has to wait for state-medical-board licensing is the AI worker that does not ship in the founder's investor-patience window. Operators who match their build to the regulatory horizon win the timing. Operators who build for the capability frontier wait for regulation that does not arrive on schedule.

The Andreessen framing is interesting and not wrong. The legal frame just isn't ready. In some domains it will get ready quickly. In some it won't. In some, of course, it won't on purpose. AI workers are unfortunately slowed down by law. At least in some domains.

—TJ