Anthropic ships every two weeks now. Your eval window just got eaten.

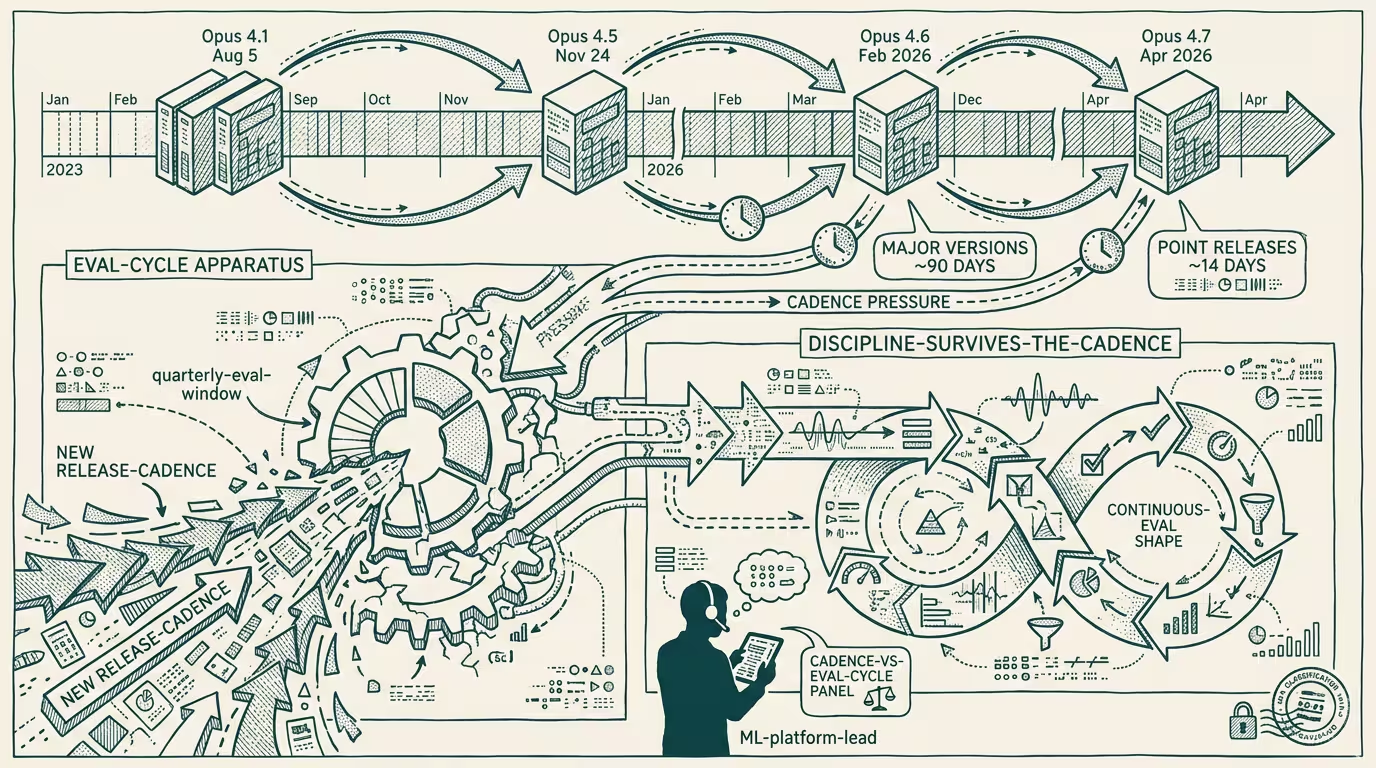

Anthropic shipped Claude Opus 4.1 on August 5, 2025; Opus 4.5 on November 24, 2025; Opus 4.6 in February 2026; Opus 4.7 in April 2026. The release cadence compressed to roughly 90 days for major versions, then to roughly 14 days for point releases. The trade-press read was "Anthropic is shipping fast." The durable read is that the quarterly eval cycle that worked through 2024 is, in 2026, a stale receipt before the eval is presented.

_The eval cycle is the variable. The release cadence is the constant._ Hold that frame in view. The discipline that survives the new cadence builds out from it.

The eval pattern that doesn't survive is the static-benchmark approach. The 2024 pattern was: pick a benchmark, run it on the new model, write up the delta, present to the team. The 2026 reality is that by the time the writeup is presented, two point releases have shipped and the benchmark numbers are stale. The replacement pattern is continuous behavioral monitoring on a fixed task suite that runs on every model version automatically, with deltas surfaced to the team at release-event cadence rather than at quarterly-meeting cadence. The work is mostly tooling: build the eval-runner pipeline once, instrument it against the API, let it ship reports on cadence. Operators without the tooling are running stale benchmarks. Operators with it are running calibrated monitoring.

The deck slide that doesn't survive is the model-version-named one. Pre-2025 the deck slide named the model the company built against. The slide had multi-quarter durability because the model had multi-quarter durability. Post-2025 the slide is operating-stale within ninety days. The replacement is naming the model family and the eval methodology rather than the version: "we benchmarked on Anthropic's Opus family using continuous behavioral monitoring on the [task-suite name]." That formulation survives version churn and signals process discipline. Operators still using the old slide are signaling absence of the process discipline.

The procurement contract that doesn't survive is the model-version-clauses one. The 2024 procurement contract specified a model version. The 2026 contract has to specify a model family with version-bump tolerance and a regression-test clause that triggers contract renegotiation if the family-level performance drops below a named threshold on a named task suite. That language is harder to write and binds tighter. Operators procuring on the 2024 language are paying for stale guarantees. Operators procuring on the 2026 language are paying for performance-guaranteed model access across the family-version churn.

The cadence compression itself is happening at every frontier lab, not just Anthropic. OpenAI's GPT-class release cadence is on the same compression curve. Google's Gemini cadence is on the same curve. Meta's Llama cadence on a slightly slower curve. Each lab's compression rate is different. The operator-class eval process has to absorb the compression across labs. The single-lab compression is hard. The multi-lab compression across procurement-relevant model families is harder.

What survives all of this is that the eval cycle that worked in 2024 is, in 2026, structurally inadequate; the three process changes named here are the floor for an operating-coherent eval process; and the eval process is, in operating practice, more important than the model selection because the model selection is going to change every ninety days regardless. By 2027 the eval-process discipline will be the standard for any operator working with frontier models. In 2026 it is a competitive advantage that a minority of operators have implemented.

Anthropic ships every two weeks now. The eval window is gone. The replacement is the process. Operators running the process are operating coherent. Operators running the 2024 quarterly cycle are presenting stale receipts to teams that already know the receipts are stale.

—TJ