What the biotech foundation models will look like.

The biotech foundation models arriving in late 2023 and through 2024 are the most underestimated thing in technology right now. That sentence will sound, to most readers, like a category confusion. The discourse around foundation models in 2024 is about chatbots and code completion and image generators. The biotech analogues are the same shape of object as those models, the same shape of training run, the same shape of scaling curve, except that they operate on the substrate from which every living thing is built. The discourse has not caught up to that fact. It will, and the gap between when it does and when the work was being done is going to look, in retrospect, embarrassing.

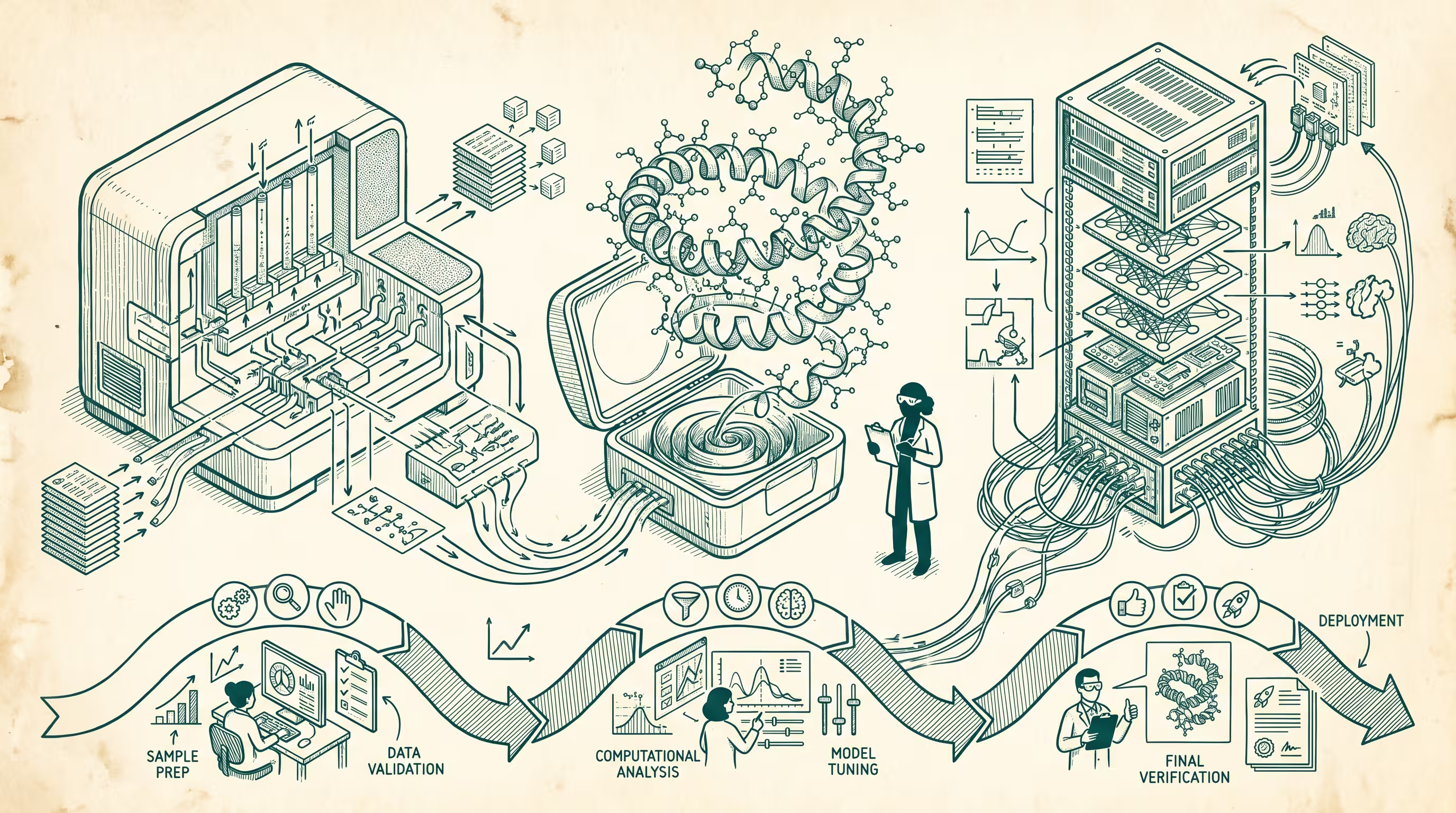

The shape is right. ESM3, from EvolutionaryScale, the spinout of Meta's protein-language-model team, is a frontier model trained on the language of proteins the way GPT-4 was trained on the language of humans. Profluent is doing the same thing on a different slice of the same problem. Inceptive is doing the equivalent for RNA. Generate Biomedicines is running the inverse-design loop end-to-end. Recursion is running the cell-image foundation model. Atomic AI is doing RNA structure. Insilico Medicine already has a small-molecule candidate from its generative pipeline in clinical trials. The list is not exhaustive and will be out of date a quarter from now in directions that strengthen the argument, not weaken it.

What is the right shape, exactly? It is the same scaling story we have already lived through once. A modality with enormous quantities of data, a representation that lets a transformer learn the structure, training compute that has gone from absurd to merely expensive in five years, and downstream tasks where the foundation model becomes the substrate for a thousand specialized applications instead of a thousand bespoke models trained from scratch. Proteins are a language. So are nucleotide sequences. So are the fluorescence patterns of cells under perturbation. The substrates that biology runs on turn out, inconveniently for our reductive metaphors and conveniently for our modeling tools, to be readable by transformers.
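To make "proteins are a language" concrete: before a transformer sees a protein or an RNA, the sequence is turned into a list of integer tokens, typically one per residue or nucleotide. What follows is a minimal, hypothetical per-residue tokenizer; the special tokens, the vocabulary order, and the names `build_vocab` and `tokenize` are my own illustrative assumptions, not the tokenizer of ESM3 or any other model named here.

```python
# Minimal, hypothetical per-residue tokenizer (illustrative only; not any real model's vocabulary).
SPECIAL_TOKENS = ["<pad>", "<bos>", "<eos>", "<unk>"]


def build_vocab(alphabet: str) -> dict[str, int]:
    """Assign consecutive integer ids: special tokens first, then each letter of the alphabet."""
    return {tok: i for i, tok in enumerate(SPECIAL_TOKENS + list(alphabet))}


# The 20 standard amino acids, and the four RNA nucleotides.
PROTEIN_VOCAB = build_vocab("ACDEFGHIKLMNPQRSTVWY")
RNA_VOCAB = build_vocab("ACGU")


def tokenize(seq: str, vocab: dict[str, int]) -> list[int]:
    """Wrap the sequence in <bos>/<eos> and emit one id per residue; unknown letters map to <unk>."""
    ids = [vocab["<bos>"]]
    for residue in seq.upper():
        ids.append(vocab.get(residue, vocab["<unk>"]))
    ids.append(vocab["<eos>"])
    return ids


if __name__ == "__main__":
    print(tokenize("MKV", PROTEIN_VOCAB))  # [1, 14, 12, 21, 2]
    print(tokenize("AUG", RNA_VOCAB))      # [1, 4, 7, 6, 2]
```

The same function handles both alphabets, which is the point: once the substrate is a string over a small alphabet, the modeling machinery is shared. Real models add further special tokens (for masking, for example) and, in some cases, tracks beyond raw sequence.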

The timeline is longer than the discourse implies

Here is where the discourse gets it wrong. The chatbot wave had a fast feedback loop. You ship a model, somebody types into it, the response is good or bad, the next model is trained, and eighteen months later the world has shifted. The biotech foundation models do not have that loop. The feedback signal is a wet experiment, a clinical readout, a regulatory filing, a years-long trial. The model can propose a thousand candidates by lunch. Validating one of them takes six months and several million dollars. The cycle time for the actually useful version of the technology, which is the version that produces a drug or a diagnostic that goes into a person, is going to be measured in years, not in eighteen-month iterations.

That is what makes this a five-to-fifteen year story rather than a three-year story, and that is what is going to confuse the discourse for the entire stretch of it. The capability curve is going to look like the chatbot curve. The product curve is going to look nothing like it. The gap between those two curves is the strangest, most productive part of this decade for anyone who can sit in it without flinching.
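A back-of-envelope sketch of why validation, not the model, sets the pace. It takes the figures from the text (a thousand candidates, six months to validate one) and adds one hypothetical parameter, `parallel_slots`, for how many validations a lab can run at once. The function name and the assumption that every candidate is validated in back-to-back fixed-size batches are illustrative, not a description of how any real program triages.

```python
import math


def months_to_validate(n_candidates: int, months_per_validation: float, parallel_slots: int) -> float:
    """Wall-clock months to validate every candidate, run in back-to-back batches of parallel_slots."""
    if parallel_slots <= 0:
        raise ValueError("parallel_slots must be positive")
    batches = math.ceil(n_candidates / parallel_slots)  # the last batch may be only partly full
    return batches * months_per_validation


if __name__ == "__main__":
    # A thousand candidates "by lunch", six months each, at two hypothetical lab capacities.
    for slots in (10, 100):
        years = months_to_validate(1000, 6, slots) / 12
        print(f"{slots} parallel validations: {years:.0f} years")
```

Under these assumptions, even a hundred experiments in parallel need five years to clear one lunchtime's worth of candidates, before any clinical stage begins. That ratio, not model quality, is what stretches the product curve.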

Three checkpoints to watch

Three things are worth watching to know whether the bet is coming in on schedule or sideways.

First, whether the inverse-design loop closes for an antibody by 2027. By that I mean a designed-from-scratch antibody, generated by a foundation model, going into humans in a clinical trial with mechanism-validated binding. Generate, Absci, and a couple of internal teams at Big Pharma are racing here. If the loop closes, the capability curve has reached the substrate where the money lives.

Second, whether RNA-design models produce a self-amplifying therapeutic that gets to Phase 2 by 2028. The lipid nanoparticle plus mRNA stack is the platform. RNA foundation models trained on the published sequence corpus plus the structural data are the engine. Self-amplifying RNA is already in people: a self-amplifying mRNA COVID-19 vaccine was approved in Japan in late 2023. The first designed-by-foundation-model entry will tell us whether the platform compounds.

Third, whether the regulatory bodies build an evaluation framework for foundation-model-generated candidates that is faster than the framework for traditional candidates. The FDA has been signaling appetite. The EMA has been signaling appetite. If the evaluation framework actually compresses the trial timeline by even thirty percent, the cycle-time problem starts to dissolve and the whole category's timeline pulls forward by as much as half a decade.
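A rough check on the "half a decade" figure, under a deliberately generous assumption that is mine, not a modeled result: take the upper end of the five-to-fifteen-year range and treat the whole span as trial-bound, so the thirty percent compression applies to all of it.

```latex
\Delta T \;=\; 0.30 \times T_{\text{horizon}} \;=\; 0.30 \times 15\ \text{yr} \;=\; 4.5\ \text{yr} \;\approx\; \text{half a decade}
```

At the lower end of the range, or if only part of the span is trial-bound, the saving shrinks accordingly (for example, 0.30 × 5 yr = 1.5 yr).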

These are not the only checkpoints. They are the ones I would put on a wall above the desk and watch.

Twelve years from now, fifteen at the outside, we will have foundation-model-designed therapeutics in routine clinical use, foundation-model-designed materials in industrial use, and a Kardashev-scale shift in what the biology stack lets us attempt. We will have a generation of biologists who came up trained alongside these models and will look at the pre-foundation-model era of biology the way I look at the pre-internet era of software, with a kind of affectionate disbelief that anything got done at all. The work is being done now. The discourse will catch up some time around 2027 and will frame the catch-up as a sudden arrival. The arrival was not sudden. It was inevitably going to take this exact shape if you squinted at the substrate the right way, which a few people did, and which the rest of us are about to.

—TJ