DOGE is the largest public-sector AI displacement test in history. The operator lessons are transferable.

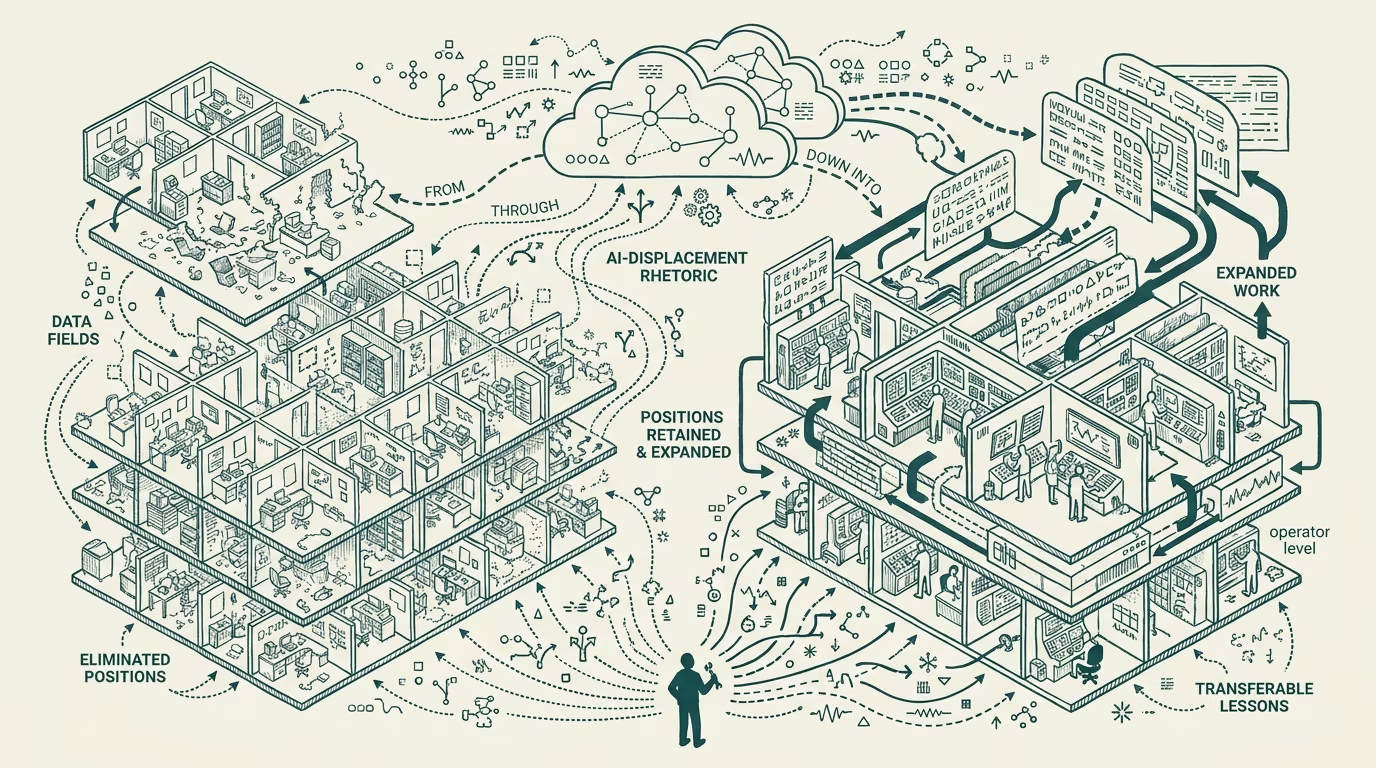

The Department of Government Efficiency (DOGE) federal workforce-reduction program, as it ran through 2025, is the largest publicly visible test of AI-driven workforce displacement in any government environment to date. The program reduced federal headcount by tens of thousands of positions across multiple agencies, with substantial public commentary pointing to AI-driven efficiency as the underlying mechanism. The deployment data through the first half of 2025 has surfaced operator-class lessons that transfer to private-sector workforce-reduction decisions, though they are not the lessons the program's framing implied.

The structural read is that the DOGE program was an org-design intervention with AI framing rather than an AI-driven displacement. The visible failure modes are recognizable to anyone who has run workforce reductions in private-sector contexts. The lessons that emerge are about the gap between the AI-displacement framing and the actual mechanism of the workforce reduction. That gap is substantial, and its consequences are visible.

This essay walks through the visible failure modes, the structural reason the framing was wrong, and the operator-class lessons that transfer to private-sector workforce-reduction decisions.

The visible failure modes

Three specific failure modes have been visible in the DOGE deployment data through 2025.

The first failure mode is that the positions cut were not consistently the positions AI can actually substitute for. The reduction was driven primarily by criteria that were not AI-displacement criteria: probationary status, tenure-tier criteria, performance-rating criteria from the prior administration's reviews, and various political-and-policy-class criteria that did not align with AI-substitutability. The positions actually substitutable by current AI capability (well-structured information-processing, document-routing, certain narrow analytical tasks) were not consistently the positions cut, and the positions cut included substantial categories of work that current AI capability cannot substitute for.

The second failure mode is that the workload on the remaining staff expanded rather than compressed. Across the affected agencies, the reduced workforce is responsible for the same volume of work (or, in some cases, more, because legal and regulatory requirements did not change with the workforce reduction), so the remaining workers face substantially worse work-volume-per-person ratios. The expectation that AI would absorb the displaced work has not generally materialized at the scale needed to offset the workload increase.

The third failure mode is that the institutional knowledge lost was disproportionately concentrated in the senior-tier and specialty-tier positions that the reduction criteria captured. That loss has produced operational consequences (delayed permits, increased error rates, slower processing of standard workflows, cascading mistakes in regulatory contexts) that the AI deployment could not compensate for.

The combined effect across the three failure modes is that the workforce reduction produced operational degradation rather than the efficiency gains the program's framing implied.

The structural reason the framing was wrong

The structural reason the AI-displacement framing was wrong is that the workforce-reduction criteria and the AI-substitutability criteria do not align. AI-substitutability requires careful task-level analysis of which specific work tasks the current AI capability can perform reliably, followed by reorganization of the workflow to allocate those tasks to AI and the remaining tasks to human workers. The DOGE program did not run this analysis. The program ran a workforce-reduction process driven by other criteria and applied AI framing to the result.

The specific work categories where AI deployment can produce meaningful efficiency gains in government contexts (claim-routing, document-classification, scheduling-and-coordination work, certain narrow regulatory-analysis tasks) were not the targets of the workforce-reduction. The work categories that were the targets of the workforce-reduction did not consistently match the AI-substitutability criteria.

The framing-vs-mechanism gap is recognizable to anyone who has run private-sector workforce-reduction programs that used efficiency-or-technology framing without doing the underlying task-level analysis. The result is the same in private-sector contexts: workforce reduction without efficiency gain, operational degradation, and the eventual recognition that the framing was disconnected from the mechanism.

The operator-class lessons

For private-sector operators considering AI-driven workforce-reduction programs, the DOGE deployment offers several specific transferable lessons.

The first lesson is that AI displacement requires task-level analysis before workforce reduction. Operators running this work need to identify the specific tasks the AI deployment can substitute for, the specific workforce capacity those tasks consume, and the specific reorganization required to allocate the remaining work effectively. The analysis takes substantial time and real engineering capacity. Operators who skip the analysis and run the workforce reduction with AI framing produce the DOGE-pattern result.
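The task-level analysis in the first lesson can be sketched in a few lines of Python. This is a minimal illustration, not a method drawn from the DOGE data: the task names, hours, substitutable shares, and the 40-hour week are all hypothetical placeholders that an operator would replace with measured figures.

```python
from dataclasses import dataclass

# Assumption: one full-time position corresponds to 40 staff hours per week.
HOURS_PER_FTE_WEEK = 40.0


@dataclass(frozen=True)
class Task:
    name: str
    weekly_hours: float  # total staff hours the task consumes per week
    ai_share: float      # fraction (0..1) judged reliably substitutable by AI


def freed_fte(tasks):
    """Capacity, in full-time equivalents, that AI substitution would free."""
    freed_hours = sum(t.weekly_hours * t.ai_share for t in tasks)
    return freed_hours / HOURS_PER_FTE_WEEK


def capacity_gap(tasks, positions_cut):
    """Positions cut beyond what substitution frees.

    A positive value is work that lands on the remaining staff.
    """
    return positions_cut - freed_fte(tasks)


# Hypothetical inventory for a single team (illustrative numbers only).
tasks = [
    Task("document routing", 400.0, 0.5),
    Task("claim classification", 200.0, 0.5),
    Task("regulatory judgment calls", 600.0, 0.0),
]

print(freed_fte(tasks))         # 7.5
print(capacity_gap(tasks, 10))  # 2.5
```

In this made-up example, cutting ten positions when substitution frees only 7.5 leaves 2.5 positions' worth of work with no one assigned to it. The point of the sketch is the order of operations: the inventory and the substitutable shares come first, and the headcount number is derived from them rather than the reverse.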

The second lesson is that institutional knowledge has substantial operational value that workforce reduction can erase quickly and that AI deployment cannot easily replace. The senior-tier and specialty-tier knowledge that organizations accumulate over years is structurally hard to substitute for, and workforce-reduction programs that displace this knowledge produce operational consequences that the AI deployment does not offset. Operators running this work need to identify the roles that carry institutional knowledge and protect them through the reduction process, which is difficult to execute and politically uncomfortable but operationally important.

The third lesson is that the cost-and-benefit analysis of AI-driven workforce-reduction needs to include the operational-degradation cost during the transition. The transition period (where the reduced workforce absorbs the increased work-volume per person while the AI deployment matures) produces measurable operational degradation that the cost-and-benefit analysis should price. Operators who do not price this cost into the analysis face the operational consequences that DOGE has surfaced publicly.
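A minimal sketch of what pricing the transition looks like, in Python. The function name, parameters, and every figure below are hypothetical and chosen only to make the arithmetic easy to follow; they are not estimates of any real program.

```python
def net_benefit(annual_salary_savings, annual_ai_cost,
                monthly_degradation_cost, transition_months, horizon_years):
    """Net benefit of a reduction over a planning horizon.

    The transition term prices the operational degradation incurred while
    the reduced workforce carries the extra load and the AI deployment matures.
    """
    savings = annual_salary_savings * horizon_years
    ai_cost = annual_ai_cost * horizon_years
    transition_cost = monthly_degradation_cost * transition_months
    return savings - ai_cost - transition_cost


# Hypothetical figures over a two-year horizon.
unpriced = net_benefit(1_000_000, 200_000, 100_000,
                       transition_months=0, horizon_years=2)
priced = net_benefit(1_000_000, 200_000, 100_000,
                     transition_months=12, horizon_years=2)

print(unpriced)  # 1600000
print(priced)    # 400000
```

With these illustrative inputs, a twelve-month transition removes three quarters of the apparent benefit. A longer transition or a higher monthly degradation cost turns the result negative, which is the case the analysis needs to be able to surface before the reduction runs.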

The fourth lesson is that the political and organizational cover available for AI-framed workforce-reduction programs is meaningful but does not substitute for the underlying analysis. Programs that ride that cover without doing the underlying work produce the operational consequences regardless. The cover helps with the political acceptability of the reduction; it does not change the operational outcome.

The DOGE program is the largest public-sector test of AI-driven workforce reduction. The operator lessons transfer to private-sector contexts. They are not flattering to the AI-displacement framing the program ran under, and operators running this work in private-sector contexts should be reading the deployment carefully. The framing was not the mechanism. The mechanism was an org-design intervention. The lessons available to operators reading carefully are about the gap between the two, a gap that is substantial and whose consequences are visible to anyone watching.

—TJ