Four bolts went unrecorded. The CMES record-keeping was the safety control.

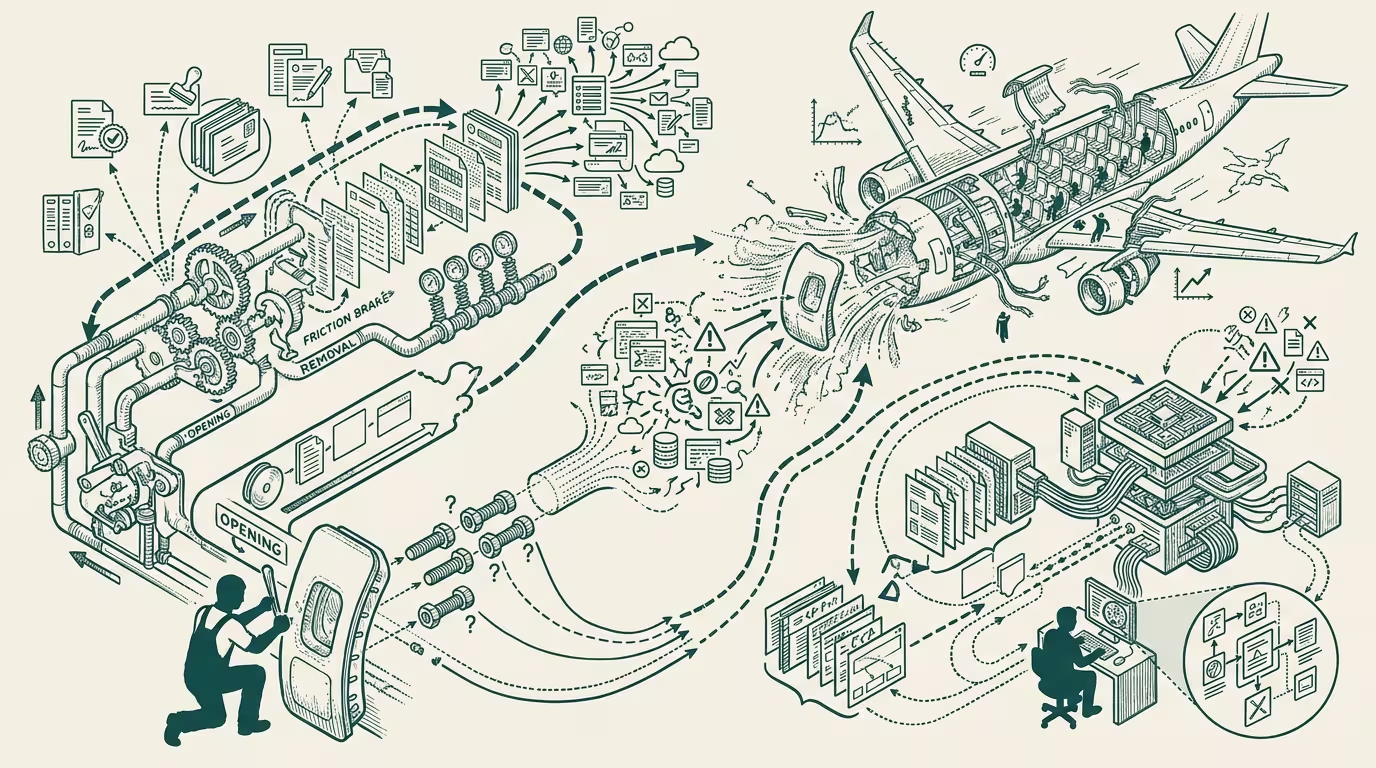

A mechanic on the Renton shift in 2023 has a door plug to deal with. The plug needs to come out so the team can rework something behind it. Two classifications exist in CMES: removal (which generates a safety-critical-bolt-removal record and triggers a documentation cascade) and opening (which doesn't). The plug-removal classification fits the operation. The opening classification carries less paperwork friction. The mechanic picks opening. The four bolts come out. The record is never created. The aircraft ships without bolts and without documentation that the bolts were ever removed.

That is, in retrospect, the moment Alaska 1282 became inevitable.

The NTSB final report on Alaska Airlines flight 1282 — the January 2024 door-plug separation incident — landed in mid-2025. The technical findings were specific: four bolts that should have secured the door plug were not installed when the aircraft left the Boeing Renton factory. The Hacker News engineering thread that followed the report's release ran several thousand comments deep. The most operator-relevant finding in the thread was not the missing bolts. It was the documentation convention that allowed the bolts to go unrecorded.

The documentation convention was the safety control.

The trade press read it as a Boeing-specific quality-management story. The lesson that holds up is broader.

_Documentation conventions are load-bearing safety controls in any system where the documented record is the audit trail for safety-critical operations._ The pattern transfers directly to clinical-AI deployment, EMR audit logs, financial-services compliance records, and any operator running AI systems where logging conventions decide whether failures are recoverable.

The core argument: when documentation is the audit trail, the documentation convention is the failure surface. A failure mode that does not generate a record is, in operating terms, a failure mode the audit trail cannot detect. The recovery process (post-incident review, root-cause analysis, corrective action) is calibrated to documented evidence, so a failure that leaves no documented evidence is one the recovery process cannot engage with.

For AI-deployment audit logs, the consequence is that operators need to be explicit about what generates a record. A clinical-AI deployment running on top of an EMR has several candidate events: the AI's recommendation is accepted, the recommendation is overridden, the recommendation is queried by a clinician, or the underlying patient data is updated. Whether each of these writes an audit-log entry is a logging-convention question, and the answer decides whether downstream safety reviews can detect the corresponding failure modes. Operators deploying clinical AI without an explicit logging-convention specification are deploying with the same kind of documentation gap that produced the Alaska 1282 incident: failure modes that don't generate records and therefore can't be detected by the audit infrastructure.
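To make "explicit about what generates a record" concrete, here is a minimal Python sketch. It assumes the four events named above are the full convention; the `AuditEvent` and `AuditLog` names, the field layout, and the in-memory list are all hypothetical and stand in for whatever the real EMR audit store provides.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class AuditEvent(Enum):
    """The explicit convention: every member here must produce a record.

    Event names are illustrative, not taken from any real EMR product.
    """
    RECOMMENDATION_ACCEPTED = "recommendation_accepted"
    RECOMMENDATION_OVERRIDDEN = "recommendation_overridden"
    RECOMMENDATION_QUERIED = "recommendation_queried"
    PATIENT_DATA_UPDATED = "patient_data_updated"


@dataclass
class AuditLog:
    # In-memory stand-in for a durable, append-only audit store.
    records: list = field(default_factory=list)

    def record(self, event, actor, detail=""):
        """Append one entry; refuse anything outside the written convention."""
        if not isinstance(event, AuditEvent):
            raise ValueError(f"unrecognized audit event: {event!r}")
        entry = {
            "event": event.value,
            "actor": actor,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
        }
        self.records.append(entry)
        return entry

    def uncovered_events(self):
        """Event types in the convention that have never been logged."""
        seen = {r["event"] for r in self.records}
        return sorted(e.value for e in AuditEvent if e.value not in seen)
```

The design choice worth noting is that the convention lives in one enumerable place. A reviewer can read the list of events that must leave a record, and `uncovered_events()` flags any event type that has produced no entries at all, which is a prompt to ask whether the event never happened or was never logged.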

The friction asymmetry predicts where the gap forms. The Boeing mechanics treated the operation as an opening rather than a removal partly because the opening classification was operationally lower-friction. Wherever the safety-relevant classification costs more documentation effort than the alternative, operators will tend to choose the lower-friction classification when the distinction is ambiguous. Healthcare-AI deployments with friction-heavy override-documentation requirements should expect systematic under-documentation of overrides. Financial-services AI deployments with friction-heavy compliance-disclosure requirements should expect systematic under-documentation of edge cases. Friction is the predictor of where the gap will form.
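One way to take friction out of the decision is to derive the classification from what was physically done, so the operator never chooses between a high-paperwork and a low-paperwork label. The Python sketch below illustrates that idea only. It is not how CMES works; `WorkStep`, `classify`, `required_records`, and the record names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkStep:
    description: str
    fasteners_removed: int  # count of safety-critical fasteners taken out


def classify(step: WorkStep) -> str:
    """Classify from the facts of the step, not from the operator's choice.

    Any safety-critical fastener coming out makes the step a removal.
    """
    return "removal" if step.fasteners_removed > 0 else "opening"


def required_records(step: WorkStep) -> list:
    """Records the step must generate (record names are hypothetical)."""
    if classify(step) == "removal":
        return ["removal_record", "reinstallation_inspection"]
    return []
```

Under this assumption the operator still reports how many fasteners came out, but the lower-friction label is no longer selectable on its own. The ambiguity that the friction exploits has been removed from the workflow.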

For post-incident reviews, the consequence is that the documentation convention has to be examined as a primary causal factor. A review that focuses on the proximate cause (in the Alaska case, the missing bolts) without engaging with the documentation convention misses the structural causal layer. The bolts could stay missing because the documentation convention let them come out without leaving a record. Operators running post-incident reviews on AI-deployment failures should explicitly examine whether the documentation conventions produced the gap that allowed the failure to go undetected. The convention layer is, in operating practice, the layer most easily missed in post-incident analysis.
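A convention-layer review can start with a simple reconciliation: take the operations known from an independent source (for example, the application's own request log) and check which ones have no matching audit record. The sketch below assumes both sources are lists of dicts that share an operation identifier; the field names `id`, `kind`, and `operation_id` are hypothetical.

```python
from collections import Counter


def unrecorded_operations(operations, audit_records):
    """Operations seen in an independent source that left no audit record."""
    recorded_ids = {r["operation_id"] for r in audit_records}
    return [op for op in operations if op["id"] not in recorded_ids]


def gaps_by_kind(operations, audit_records):
    """Count unrecorded operations per kind, to show where the convention leaks."""
    missing = unrecorded_operations(operations, audit_records)
    return Counter(op["kind"] for op in missing)
```

If the gaps cluster in one kind of operation, such as overrides, that points to the convention (or the friction around it) rather than to any individual operator.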

The same pattern recurs across operator deployments in regulated categories. The Alaska 1282 case is the cleanest visible 2024-2025 case study because the engineering discussion on Hacker News produced a publicly readable analysis of the convention-layer failure. The pattern shows up in clinical-AI logging, in financial-services compliance audit-trail systems, in autonomous-vehicle safety-event recording, and in cybersecurity-incident response systems. Each category has its own version of the CMES gap, and each category's safety posture is only as strong as the documentation conventions its operators have implemented.

Three points survive all of this. The Alaska 1282 case is one of the cleaner public engineering markers of a documentation-convention-as-safety-control failure. The operator-tier lesson transfers directly to AI-deployment audit-log discipline. And the structural improvement is to specify documentation conventions explicitly rather than leave them implicit in the operational workflow. Operators who specify the conventions explicitly capture the safety-recovery capacity that the documentation provides. Operators who leave the conventions implicit absorb the failure modes that fall through the documentation gaps.

Four bolts went unrecorded. The CMES record-keeping was the safety control. The lesson generalizes to every operator system where the audit trail is the failure-detection mechanism. AI deployments with implicit documentation conventions are operating with the same gap. The fix is the same fix Boeing should have implemented in CMES — explicit conventions, low-friction documentation paths, and post-incident engagement with the convention layer rather than just the proximate cause.

—TJ