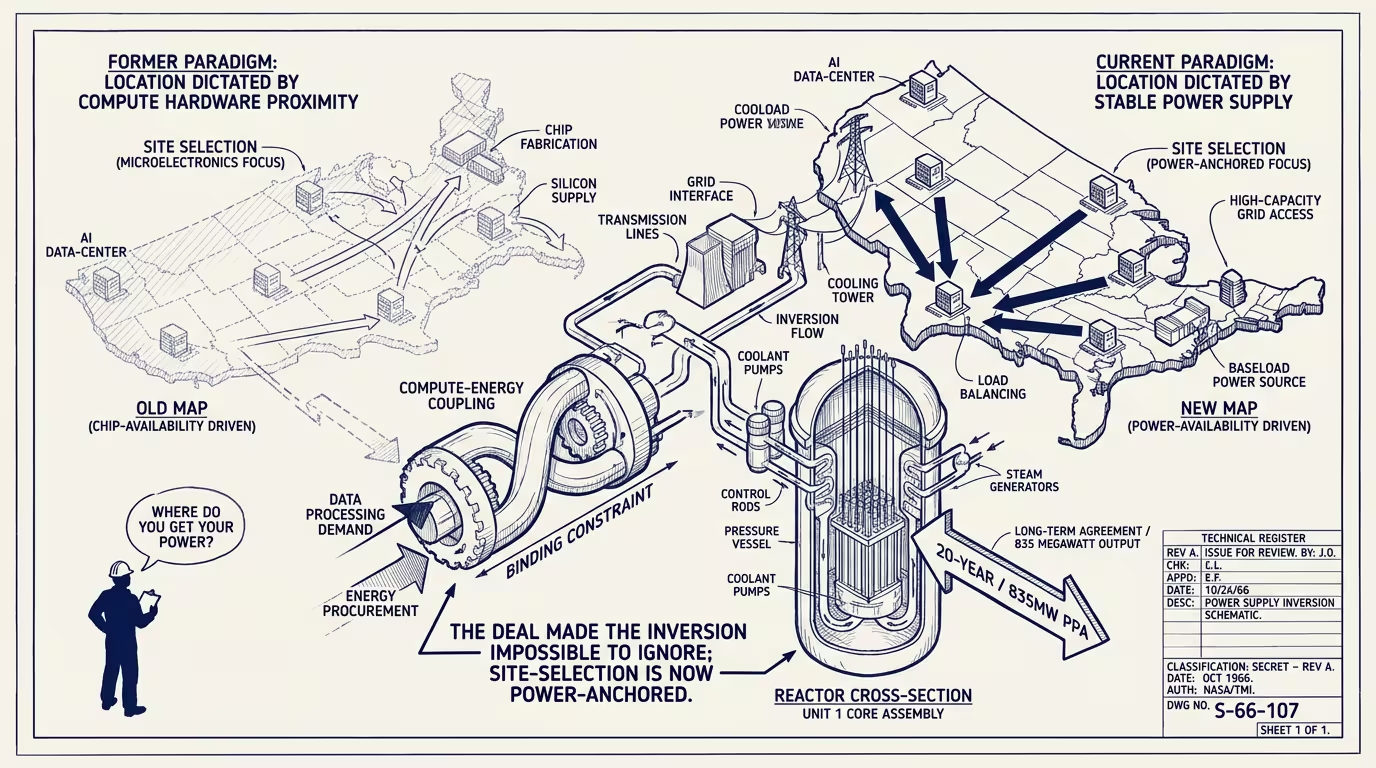

Microsoft bought the output of a nuclear reactor. The grid became the new chip.

In September 2024 Microsoft signed a 20-year, 835-megawatt power-purchase agreement with Constellation to restart Three Mile Island Unit 1. The plant had been shut for five years. Restarting it requires multi-year regulatory clearance, a refurbishment that Constellation has put at roughly $1.6 billion, and a workforce that has to be rebuilt after most of the original staff retired during the shutdown. The unit is not expected to deliver power until 2028. Microsoft signed the deal anyway because, in operational terms, the company had run out of other options for AI-data-center power on the timeline its capex plan required.

That deal is the visible proof of an inversion that had been building inside the AI-infrastructure category for two years. The deal made it impossible to ignore.

The inversion is this. For most of the cloud era, compute was the constraint and energy was the abundance. A data center went where the network was, where the labor was, where the tax incentives were. Power was a cost line, mostly small relative to land and labor and the depreciation of the chip fleet. The site-selection conversation was about latency to customers and connectivity to the network backbone, and the power question was a checkbox somewhere in the procurement deck.

By 2024 that had inverted. AI compute, specifically GPU-class compute at hyperscaler scale, draws power per rack at multiples of what a 2018 server rack drew. A single H100-class data hall consumes the power of a small American suburb. The site-selection map for new AI data centers is no longer a question of latency-and-labor-and-incentives; it is a question of which counties have grid interconnection capacity available, which utility commissions have approved transmission expansions, and which generation sources can underwrite a multi-decade power-purchase agreement at the magnitudes the hyperscalers need.

The grid became the new chip.

That phrase is the right operator framing because it captures the substitution. Through the early 2020s the bottleneck on a hyperscaler's expansion plan was procuring chips at scale. Nvidia held the bottleneck and captured the rents. From the mid-2020s the bottleneck shifts to procuring power at scale. Whoever holds the power-procurement bottleneck captures the next decade's rents. That has implications for the regulated utilities, for the independent power producers, for the nuclear renaissance the industry is quietly pricing in, and for the geographic distribution of the next generation of AI infrastructure.

Three implications worth dwelling on.

First, the site-selection map inverted from "latency-first" to "power-first." A 2024 data-center buyer looking at a Loudoun County, Virginia location is finding that the grid-interconnection queue extends past 2028 and that the local utility cannot underwrite the 500-megawatt load growth the buyer needs. The buyer who would have signed in Loudoun in 2018 is now signing in regions where the power is available and the latency is acceptable: south Texas, eastern Pennsylvania near nuclear plants, parts of the Mountain West with stranded hydroelectric capacity, parts of Iowa with wind paired with battery storage. The geographic distribution of new AI data centers in 2025-2027 looks materially different from the 2010s distribution, and the difference is the power map.

Second, the nuclear renaissance is no longer hypothetical. The Microsoft-TMI deal is the visible one. Behind it, every major hyperscaler is in active conversation with every operating U.S. nuclear plant about long-term PPAs. Several are in conversations about restarting shut units. A handful are in early-stage agreements with small-modular-reactor vendors for 2030s deliveries. The economics that did not work for nuclear in 2015 work in 2024 because the hyperscaler's willingness-to-pay for firm baseload power, on a 20-year term, is materially higher than what the wholesale grid has been paying. Nuclear-plant economics, recalibrated for hyperscaler offtake, become viable at price points that the open market never offered. The structural shift is happening on the contract terms.

Third, the regulated-utility relationship with the hyperscaler is being repapered. A data-center load that grows from 100 megawatts to 800 megawatts over five years is, for a regulated utility, the largest single customer expansion the utility will face in a decade. The utility's regulator decides whether the cost of the transmission upgrade is borne by all ratepayers (which raises everyone's bill to subsidize the hyperscaler) or borne by the hyperscaler directly (which makes the data center economics tighter). That regulatory decision, replicated across thirty state utility commissions over the next four years, is one of the most consequential infrastructure-policy questions the U.S. is going to face. The hyperscalers have, of course, the lobbying budget to influence the answer. The ratepayers do not.
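The two allocation outcomes can be put side by side with some back-of-envelope arithmetic. What follows is a minimal sketch, not a rate-case model: the upgrade cost, recovery period, ratepayer count, and load factor are placeholder assumptions, the function names are hypothetical, and recovery is straight-line with no financing cost or allowed return. Only the 800-megawatt load comes from the paragraph above.

```python
HOURS_PER_YEAR = 8760


def annual_recovery(upgrade_cost: float, years: int) -> float:
    """Straight-line annual cost recovery; ignores financing costs and allowed return."""
    return upgrade_cost / years


def cost_per_ratepayer(upgrade_cost: float, years: int, ratepayers: int) -> float:
    """Annual bill impact per customer if the upgrade is spread across all ratepayers."""
    return annual_recovery(upgrade_cost, years) / ratepayers


def adder_per_mwh(upgrade_cost: float, years: int, load_mw: float, load_factor: float) -> float:
    """Cost per MWh consumed if the data center alone pays for the upgrade."""
    annual_mwh = load_mw * HOURS_PER_YEAR * load_factor
    return annual_recovery(upgrade_cost, years) / annual_mwh


# Placeholder inputs for illustration only; the 800 MW load is the one figure from the text.
socialized = cost_per_ratepayer(upgrade_cost=400e6, years=20, ratepayers=2_000_000)
direct = adder_per_mwh(upgrade_cost=400e6, years=20, load_mw=800, load_factor=0.9)

print(f"spread across ratepayers: ${socialized:.2f} per customer per year")
print(f"borne by the data center: ${direct:.2f} per MWh")
```

The shape matters more than the placeholder numbers: the same annual recovery is either a small charge on millions of bills or a per-megawatt-hour adder on one very large one, and the regulator picks which.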

The cost calculation an operator running production AI in 2025 has to do has shifted as a result.

In 2022 the operator's compute-cost calculation was: how many H100s do I need, what does Nvidia charge, what is my cloud markup. The compute was the load-bearing line. In 2025 the calculation is: how many H100-equivalent GPUs do I need, what does my access-to-power constraint look like over the contract term, what is my cloud provider's exposure to power-cost pass-through clauses, and what do my unit economics look like if my cloud's average power cost rises 30% in the next 36 months. The power-cost line is now a meaningful fraction of the compute-cost line, and the operator who has not modeled it is, in 2025-2026, going to discover the gap on a quarterly cloud bill that surprises the CFO.
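The 30% stress test is simple enough to sketch. The model below is a hedged illustration, not a pricing tool: the `ComputeBill` class, the GPU count, the hourly price, the share of that price attributable to power, and the pass-through fraction are all assumptions made up for the example. Only the 30% rise comes from the scenario above.

```python
from dataclasses import dataclass


@dataclass
class ComputeBill:
    """Hypothetical monthly bill for a rented GPU fleet (illustrative inputs only)."""
    gpu_count: int              # H100-equivalent GPUs under contract
    price_per_gpu_hour: float   # all-in cloud price in USD (assumed)
    power_share: float          # fraction of that price attributable to power (assumed)
    pass_through: float = 1.0   # fraction of a power-cost rise passed on to the customer

    def monthly_cost(self, power_increase: float = 0.0, hours: float = 730.0) -> float:
        """Monthly cost after a fractional rise in the provider's power cost."""
        base = self.gpu_count * self.price_per_gpu_hour * hours
        uplift = base * self.power_share * power_increase * self.pass_through
        return base + uplift


# Placeholder inputs, not market data.
bill = ComputeBill(gpu_count=1000, price_per_gpu_hour=2.50, power_share=0.20)
baseline = bill.monthly_cost()
stressed = bill.monthly_cost(power_increase=0.30)

print(f"baseline: ${baseline:,.0f} per month")
print(f"after a 30% power-cost rise: ${stressed:,.0f} per month")
print(f"bill increase: {stressed / baseline - 1:.1%}")
```

Under these placeholder inputs, a 30% power-cost rise that is fully passed through lifts the bill by 6%, because the increase is the power share times the rise times the pass-through fraction. The two inputs worth pinning down with a real provider are the power share and the pass-through clause, since they set how much of the rise reaches the invoice.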

The harder operator-class question is what the substitution-shape implies for the next platform-tier company.

In the chip era, Nvidia captured the rents. In the power era, the rent-capture is structurally different because power is a regulated category and Nvidia was not. The most likely shape is that no single company captures all the rent the way Nvidia did. The rent gets distributed across the regulated utilities (some), the independent power producers (some), the hyperscalers themselves (the ones who lock in long-term PPAs at 2024-favorable rates), and the SMR vendors that ship deliveries in the 2030s (a smaller capture but a long tail). The new platform-tier company in this era looks like a vertically-integrated power-and-compute operator that owns generation, owns the data center, and sells AI compute as a commodity from that integrated stack. That company, in late 2024, exists in fragmentary form across several hyperscalers' planning teams and one or two independent operators in early-funding rounds. By 2030 it is one of the most valuable infrastructure companies in the world.

The thing that crosses pillars is that the AI-platform conversation has to expand its scope. The conversation that has been happening for three years is "which model wins, which lab wins, which agent runtime wins." That conversation continues. Underneath it, a separate conversation is starting: which power-procurement-strategy wins, which utility-relationship wins, which generation-source wins. The operator running production AI in 2024 who is not paying attention to the second conversation is the operator whose compute costs surprise them in 2026.

The energy question is the kind of infrastructure question that does not show up in the trade-press AI-news cycle. The power-purchase agreement is signed, the press release names the hyperscaler, the trade press writes a one-day story, and the rest of the implication is left for the operator to figure out. Microsoft signed in September 2024 because the implication was already operational. The operators who saw the signal early are the operators whose 2027 compute economics look much better than their competitors'. The operators who read the trade-press story as one of many announcements are the operators whose 2027 compute economics will show that they did not see this coming.

Energy is the new chip. The grid is the four-year overhang. The operators who priced this in 2024 are the operators who run the platforms of 2030. The operators who treated the Microsoft-TMI deal as one of many infrastructure announcements are, by structural inevitability, in a different position. Both outcomes were predictable from the substrate-shift. Neither feels sudden from the inside. The substrate-shift is, of course, the kind of thing that always feels gradual until it doesn't.

—TJ