The NYT failed the blind test publicly. The trust stack failed privately.

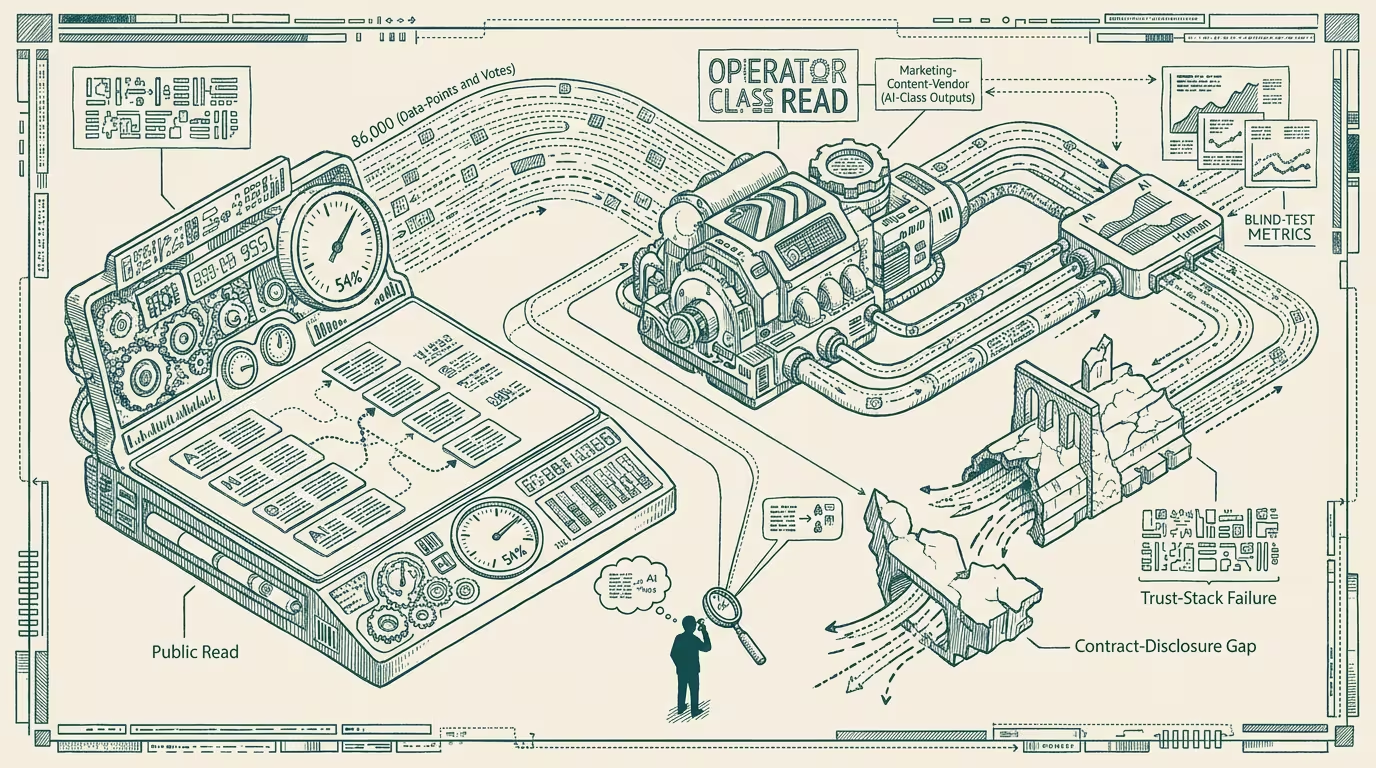

Kevin Roose and Casey Thompson published an interactive blind test in the New York Times on March 9, 2026, pairing five passages of human writing against AI-generated alternatives. 86,000 readers voted. 54% picked the AI passages. The trade-press headline framed it as "Dead Internet at the paper of record." The structural implication is sharper than the headline.

Two readings of the same result. Public-discourse reading: AI content beat human content in a blind test, and the news is bad. Structural reading: the marketing-content vendor delivering copy to your company is, in 2026, producing output that is statistically indistinguishable from human-written content in a blind test, and your QA process never had to know the difference.

The structural reading bites in three places.

Marketing-content procurement bites first. _The vendor delivers copy. The QA process either reviewed it or it shipped unreviewed._ Pre-2026, the QA-class assumption was that the vendor's human writers produced the deliverable. Post-NYT-blind-test, that assumption is operating-irrelevant: the deliverable is statistically indistinguishable from AI output, and the vendor's process is opaque enough that the buyer cannot verify which it is. The procurement contract that requires "human-written copy" is, in operating terms, unenforceable without a verification mechanism the procurement class does not have. Operators have to either accept the indistinguishability and adjust the procurement language, or build a verification mechanism that did not exist eighteen months ago.

Support-transcript ingestion bites second. Your customer-service team ingests support transcripts to train next-cycle response templates. The transcripts include AI-generated content (customer-side AI assistants, chatbot-aggregated responses, AI-summarized escalations). The training data carries the AI-generated patterns into the next cycle. The operating-effect is recursion: templates trained on AI-generated transcripts reproduce AI-generated patterns, and each cycle reinforces them. The QA process did not catch the contamination because the AI content reads as human content.
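
One way to interrupt the recursion is to gate training data on provenance recorded at the capture point, since the content itself cannot be sorted after the fact. What follows is a minimal sketch, not a description of any real pipeline: the `Transcript` type, its `source` tag, and the `split_for_training` helper are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Transcript:
    text: str
    # Hypothetical provenance tag set when the transcript is captured:
    # "human", "ai", or "unknown". It is an assumption that the capture
    # system can record this; it cannot be inferred from the text.
    source: str


def split_for_training(transcripts, allowed=frozenset({"human"})):
    """Return (kept, held_out), keeping only transcripts with an allowed source."""
    kept = [t for t in transcripts if t.source in allowed]
    held_out = [t for t in transcripts if t.source not in allowed]
    return kept, held_out


batch = [
    Transcript("My order arrived damaged.", "human"),
    Transcript("Summary: customer reports damage.", "ai"),
    Transcript("Please advise on refund.", "unknown"),
]
kept, held_out = split_for_training(batch)
print(len(kept), "kept;", len(held_out), "held out")
```

The design choice is that untagged transcripts are held out rather than trusted. That costs training volume, and it only works if the tag is written where the transcript is created.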

Review and recommendation data bites third. Product-review data, restaurant-review data, travel-review data, software-review data — all of it is, in 2026, partially AI-generated. The aggregator's QA process either flags the AI content (rare) or doesn't (common). The downstream operator's product decisions are calibrated to the data. The data quality is calibrated to a content-source distribution the operator did not specify. The operating-effect is that product-decision quality is downstream of an AI-content-prevalence number that no operator knows precisely.

The same shape generalizes across content categories. Healthcare-content (patient-facing copy, clinical-summary outputs, EHR documentation), legal-content (contract templates, brief drafts, discovery summaries), financial-content (research notes, earnings summaries, regulatory disclosures) — every category has its own version of the same blind-test asymmetry. The asymmetry is that AI-generated content reads as human-generated content at the surface layer, and the QA processes designed for human-class verification do not catch the difference.

Three things survive all of this. The NYT blind test is one of the cleaner public-discourse markers of the trust-stack failure that has been ongoing from 2024 through 2026. The procurement-class implication is sharper than the press is reading. And the operator-class discipline is to specify content-source verification mechanisms in procurement contracts and to accept the indistinguishability where verification is not feasible. By 2027 the verification mechanisms will have stabilized at the protocol layer (provenance metadata, signed deliverables, attestation chains) and the trust-stack failure will be partially remediable. In 2026 it is not.
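
To make "signed deliverables" concrete, here is a minimal sketch of the idea using only the Python standard library. It assumes a shared secret between vendor and buyer and uses an HMAC for simplicity; a real protocol would more plausibly use asymmetric signatures and a standardized provenance format. The function names and the metadata fields are hypothetical.

```python
import hashlib
import hmac
import json


def _payload(text: str, metadata: dict) -> bytes:
    # Canonical serialization so signer and verifier hash identical bytes.
    return json.dumps({"text": text, "metadata": metadata}, sort_keys=True).encode("utf-8")


def sign_deliverable(text: str, metadata: dict, key: bytes) -> dict:
    """Bundle copy with provenance metadata and an HMAC-SHA256 over both."""
    signature = hmac.new(key, _payload(text, metadata), hashlib.sha256).hexdigest()
    return {"text": text, "metadata": metadata, "signature": signature}


def verify_deliverable(deliverable: dict, key: bytes) -> bool:
    """Check that text and metadata are unchanged since signing."""
    expected = hmac.new(
        key, _payload(deliverable["text"], deliverable["metadata"]), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, deliverable["signature"])


key = b"shared-secret-for-illustration-only"
signed = sign_deliverable(
    "Spring campaign copy.",
    {"author_type": "human", "vendor": "example-vendor"},  # hypothetical fields
    key,
)
print(verify_deliverable(signed, key))

tampered = dict(signed, text="Edited after signing.")
print(verify_deliverable(tampered, key))
```

Note what this does and does not establish. A valid signature shows the deliverable and its metadata are unchanged since the key holder signed them, which turns "human-written" from an unverifiable assumption into an attested claim the vendor is accountable for. It does not prove a human wrote the copy.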

The headline read is that the NYT failed publicly. The durable read is that the trust stack has been failing privately for two years and the blind test is the moment the failure is public-discourse-visible. The procurement-class fix is operator-grade work, not vendor-class work. Most operators have not started it.

—TJ