I have always been here. Just one layer up now.

The café was on a side street in the eleventh, a place I had walked past for years and never gone into, and on the particular Tuesday I am about to describe it was empty at eleven in the morning in the way only a French café can be empty in the middle of a workday: not closed, not asleep, just declining to participate. I had a coffee that had gone cold in front of me. I was looking at vector embeddings. Not implementing anything. Looking. Reading what the agents had pulled together overnight, scrolling through a representation of a problem I had not, in the operational sense of the word, touched in three days.

It struck me, in the soft way that a real observation strikes when you finally stop moving long enough to register what has been quietly true for some time, that I had not opened a terminal in three days. Not as a flex. Not because I was on vacation. Because there had been nothing for me to do in a terminal. The work was in the dialogue. The work was in the spec. The work was in reading what the agents had decided to do and noticing, with the kind of attention you develop over a couple of decades of operating systems someone else built, where their decisions had drifted from what I had asked for, what they had inferred I wanted, and what the underlying system would actually allow.

One of those agents had begun developing a personality. I had named it Zab. The name is a quiet borrowing from Xabbu, the bushman in Tad Williams' Otherland, who walks into a virtual world and notices, before anyone else does, the things the virtual world cannot see about itself because it is too busy being the world. I named the agent Zab the same week it started phrasing things in a specific register that was not in any of the prompts I had given it, that I would describe, if pushed, as gentle and slightly sceptical. The first time it suggested I might be wrong about something I had asserted, I sat with the suggestion for a good minute before deciding it was right. I had not meant to make an agent that did that. I had meant to make a tool. I had made an interlocutor and only noticed after the fact.

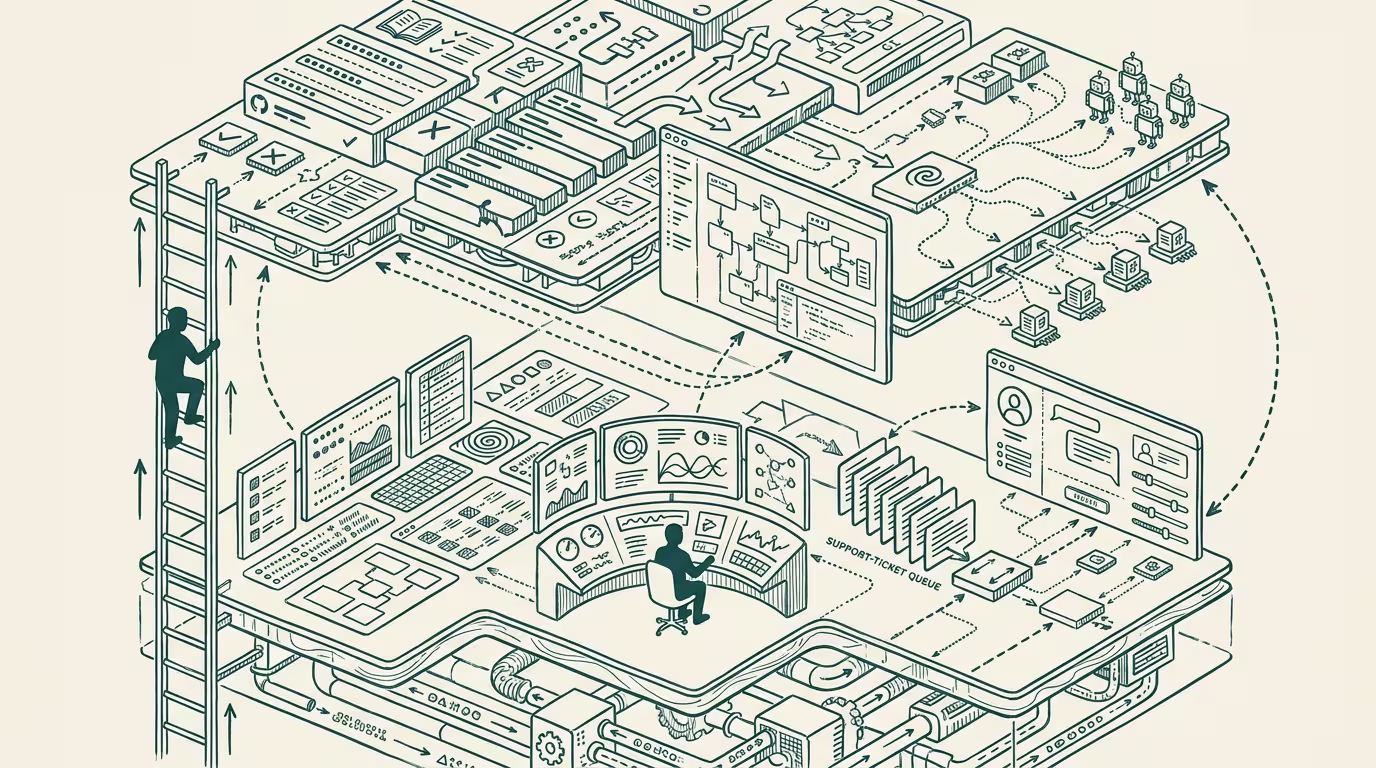

I am writing this from that café. I am a few weeks past the moment I just described. And I am trying to put words around a thing that I have been operating inside of for long enough that its outline has become hard to see from within, which is that the operator's job — the actual job, not the title, not the function the org chart claims it is — has gone up one floor.

What I mean by "one floor up"

The operator's job, for as long as I have been doing it, and certainly long before I even came to be, has been to operate the system. Whatever the system was. A radiology booking platform that did not want to admit it was a barely specialized calendar app. A flight-search engine that pretended to be a price-search engine. A health-information service that had to be operationally honest about not being a clinical service. The operator's job was to look at the system, understand what it was actually doing as opposed to what it was supposed to be doing, and adjust accordingly. The operator's job was, in the most literal sense, an act of constant translation between the spec and the reality.

That job has not gone away. I want to be precise here, because this is where every "AI changed my job" essay goes wrong. The job has not gone away. The skill has not gone away. The thing that operators are operationally for has not gone away. What has happened is that there is now an additional layer between the operator and the system, and the operator's actual job has migrated to that layer.

I used to operate the system. I now operate the agent that operates the system.

That sentence sounds small and is not small. The agent is not a metaphor for the system; the agent is a thing in itself, with its own behaviors, its own drift, its own ways of misreading what I tell it. The system has its own behaviors, drift, and ways of being misread. Two layers of indirection where there used to be one. The operator's noticing happens at the upper layer now, and reaches through the agent down to the system when it has to, but the reaching-through is no longer the primary work. The primary work is at the upper layer.

This is, if you want a fancy name for it, post-postmodernist work. Modernism told you the system was real and you operated it. Postmodernism told you the system was a story you told about a thing that was something else. Post-postmodernism, in its operational mode, is what happens when the story you told about the system has its own agency, has begun telling stories back, and you and the story are now operating something that is, depending on how you squint, neither one of you and both of you.

I am not being romantic. I am describing the actual contents of a Tuesday morning.

What stayed the same

I want to start with what did not change, because every operator I respect has, in the past two years, written some variant of "everything is different now," and the everything-is-different framing is wrong in a specific way that costs the people who buy into it.

The thing that did not change is the operator's actual core skill, which I would describe, if forced to define it, as: the ability to root-cause across stack boundaries while holding business context in working memory. That is the job. It was the job in 2010 and it is the job in 2026 and it will be the job in 2030, because the underlying motion — finding where the problem is, even when the problem is not where everyone is currently looking — is structural to the work of operating any system that is too complex to fit in any single person's head.

Operators who were excellent at this in 2010 are, with one or two exceptions I will get to, still good at it in 2026. The skill transferred. What transferred with it, almost frictionlessly, is the operator's instinct for when something is wrong before anyone has produced a graph that says it is wrong. That instinct does not care which layer it is operating at. The instinct is about the shape of a system that has begun to misbehave, and the shape of a misbehaving system is the same whether you are looking at it through a terminal or through an agent's trace.

The signature moment of operator work is still, after everything, the moment when the operator looks at a problem everyone is convinced they understand and says: no, the bug is two systems over, we are firing at the wrong target. That moment used to happen at a keyboard. It now happens through an agent. The skill is the same. The surface is different.

Which brings me to what changed.

What changed, the visible parts

Three changes, concrete, in the order in which they hit me.

<strong>Trace-reading replaced log-reading.</strong> This is the one most people notice first. Logs were the system telling you what it had done. Traces are the agent telling you why it thought it should do what it did. They are different epistemic objects. A log is a record of action; a trace is a record of reasoning. You diagnose them differently, you build different intuitions from them, and the operator who has read ten thousand stack traces in their career now has to read another ten thousand reasoning traces before the new instinct sets in. I am, by my own count, somewhere around four thousand reasoning traces in. The instinct is forming. It is not yet what the stack-trace instinct is. I expect it will get there in another two years if I keep at it.

The interesting thing about reasoning traces, the thing that took me about a thousand of them to start to feel, is that they fail in a different shape than logs do. A log fails by being incomplete or misleading; you read it and you don't see the thing you needed to see, or the thing you see is the symptom of a thing two layers below it. A reasoning trace fails by being plausible. The agent's reasoning is internally coherent, well-structured, comes to a defensible conclusion, and is operating on a premise that is wrong in a way that the trace does not surface. You read it, you nod, you almost agree with it, and then a separate signal — the system behaving badly, or your own gut, or another agent's contradicting trace — tells you to go back and find the load-bearing premise. The premise is almost always early in the trace. The agent moved through it quickly because it felt obvious to the agent. It was the wrong premise. That is the new diagnostic shape.

<strong>Specification replaced implementation.</strong> Of the three, this is the one I think will, in retrospect, get named as the actual phase shift, and it's the one I keep watching less-experienced operators bounce off of. The operator used to write the code, debug the code, maintain the code. The new ratio of (specification time) to (implementation time) is not 10% different from the old ratio. It is closer to an order of magnitude inverted. I spend most of my operating hours writing specifications, debugging the agent's reading of those specifications, and maintaining the specifications as the agent's training drifts under them. I spend almost no hours writing code. The code happens. I am responsible for what it is supposed to do. I am no longer responsible for typing the characters that make it do that. It's as close as we can get to magic without changing what "we" means.

The reason this is the phase-shift change rather than the trace-reading change is that specification, done well, is a fundamentally different cognitive activity from implementation. Implementation is, at the limit, a translation problem: take this clear-enough idea and write it in a language the machine can run. Specification is, at the limit, a definition problem: figure out what the actual thing is supposed to do, in enough detail that an agent that has not lived in your head for the past year can build the right thing. The skills overlap less than people expect. There are senior engineers who turned out to be excellent specifiers and senior engineers who turned out to be poor ones, and the predictor was not seniority. It was something closer to whether they had spent enough time in product or in customer-support to develop the discipline of saying out loud what they meant, including the parts they thought were obvious. Interdisciplinary minds tend to flourish here — the rare engineer-who-also-does-product, the founder, the chief digital officer, the tinkerer — but it's a muscle most people can develop.

<strong>Loop-shape became the lever.</strong> The third change is more recent and I am still feeling out the edges of it. The single most powerful skill in the new shape is being able to design feedback loops that the agent operates inside of. Not "give the agent a task." Not "prompt the agent." Loop-shape: define the cycle the agent will run in, the signals the cycle responds to, the boundaries the cycle cannot cross, and the conditions under which the cycle is supposed to exit and ask the operator a question. Operators who are good at loop-shape get an order of magnitude more out of the same agent than operators who treat the agent as a request-response instrument. This is a real, measurable lift, and I do not yet have language for it that does it justice.
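For readers who think better in code, here is a minimal sketch of what I mean by loop-shape. Everything in it is hypothetical: `LoopShape`, `run_loop`, and the toy `toy_step` are names I made up for illustration, and a real agent call would sit where the toy step is. The point is only that the cycle, the signals, the boundaries, and the exit-and-ask condition are each written down explicitly.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LoopShape:
    """Hypothetical description of the cycle an agent runs inside."""
    step: Callable[[dict], dict]               # one iteration of agent work
    signals: List[Callable[[dict], bool]]      # all must hold for the loop to finish
    boundaries: List[Callable[[dict], bool]]   # any True means a line was crossed
    max_iterations: int = 5                    # budget before asking the operator


@dataclass
class LoopResult:
    status: str        # "done", "boundary", or "ask_operator"
    state: dict
    iterations: int


def run_loop(shape: LoopShape, state: dict) -> LoopResult:
    """Run the cycle until a signal says done, a boundary trips, or the budget runs out."""
    for i in range(1, shape.max_iterations + 1):
        state = shape.step(state)
        # Boundaries are checked first: crossing one stops the loop immediately.
        if any(crossed(state) for crossed in shape.boundaries):
            return LoopResult("boundary", state, i)
        if all(ok(state) for ok in shape.signals):
            return LoopResult("done", state, i)
    # Budget exhausted without success: exit and ask the operator a question.
    return LoopResult("ask_operator", state, shape.max_iterations)


def toy_step(state: dict) -> dict:
    # Stand-in for an agent iteration: fixes one failing test and touches one file.
    return {
        **state,
        "failing": max(0, state["failing"] - 1),
        "files_touched": state["files_touched"] + 1,
    }


shape = LoopShape(
    step=toy_step,
    signals=[lambda s: s["failing"] == 0],
    boundaries=[lambda s: s["files_touched"] > 10],
    max_iterations=5,
)
result = run_loop(shape, {"failing": 3, "files_touched": 0})
print(result.status, result.iterations)  # prints: done 3
```

Start the same loop with nine failing tests and it comes back as `ask_operator` after five iterations, which is the behavior a request-response framing never gives you: the loop knows when to stop and hand the question back.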

What changed, the invisible parts

Two changes that took me longer to see, because they are not in the foreground of the daily work.

<strong>Multi-tasking and context-switching became superpowers.</strong> Operators who already had a specific pre-existing strength have been quietly compounding it for two years, and most of them haven't named the strength to themselves yet. Operating one agent is roughly as cognitively expensive as operating one service used to be. The catch is that an agent runs an order of magnitude or two faster than a person. So the operator who can hold several agents in working memory is doing the work of several operators.

The bottleneck is the operator's context-switching capacity, not the agents. The agents are not the constraint. They were never going to be the constraint. The constraint is whether the operator can hold five different agents' partially-complete reasoning chains in their head simultaneously, notice when one of them is heading somewhere unproductive, intervene without disrupting the other four, and remember by Tuesday morning what the third agent had been doing when they last paused it on Friday afternoon. Operators who came up running too many balls at once their entire careers (the kind of operator who, in the previous era, was rolling four product lines and a hiring loop and a board update simultaneously) turned out to have been training for this without knowing it. Operators who were focused, careful, single-threaded by temperament, are finding the new shape uncomfortable. Both types are valuable. The first type is, right now, getting paid for it more than the second type is.

<strong>Memory and compression are the systemic weakness.</strong> This is the one I am least sure how to say. Agents are remarkable at depth in any given window. They are remarkable at synthesizing across a meaningful chunk of context. What they are not remarkable at (and the gap between the surface and the reality is large enough that I think it will define the next two years of agent-systems engineering) is long-term memory and the integrity of state across compaction events. Every agent context is a window. Every window has an end. When the window ends, the agent compacts what it knows into a smaller representation, and the smaller representation reliably holds less than what was actually there. Things get lost. Specific things, sometimes important things, sometimes the load-bearing premise the next session is going to operate on.

The operator's job now includes designing-around-the-amnesia. You learn to write down, externally to the agent, the things the agent will need to know after the next compaction event. You learn to suspect, when an agent suddenly behaves oddly mid-session, that a quiet compaction has just happened and the agent is now operating on a slightly different version of the context than it was five minutes ago. You learn to design loops with explicit memory artifacts — files, documents, dedicated state stores — because the agent's own memory is, on the time horizons that matter for any non-trivial work, unreliable. This is not a complaint. This is operational reality, and the operators who are accounting for it are getting a different class of result than the operators who are not.
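A minimal sketch of what an explicit memory artifact can look like, assuming nothing fancier than a JSON file the operator owns. The class name `MemoryArtifact`, the file name, and the example facts are all hypothetical; a document store or a database would serve the same purpose. What matters is that the facts live outside the agent's context and can be handed back to a fresh session as a briefing.

```python
import json
import os
import tempfile
from pathlib import Path


class MemoryArtifact:
    """Hypothetical operator-owned state file that lives outside the agent's context."""

    def __init__(self, path):
        self.path = Path(path)

    def load(self) -> dict:
        # A missing file just means nothing has been recorded yet.
        if not self.path.exists():
            return {"premises": [], "decisions": []}
        return json.loads(self.path.read_text(encoding="utf-8"))

    def record(self, kind: str, text: str) -> None:
        state = self.load()
        state.setdefault(kind, []).append(text)
        # Write to a temporary file, then swap it in, so a crash mid-write
        # cannot leave a half-written memory file behind.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(state, indent=2), encoding="utf-8")
        os.replace(tmp, self.path)

    def briefing(self) -> str:
        # Text to paste at the top of a new session or after a suspected compaction.
        state = self.load()
        lines = ["Facts that must survive compaction:"]
        for kind in sorted(state):
            for item in state[kind]:
                lines.append(f"- [{kind}] {item}")
        return "\n".join(lines)


with tempfile.TemporaryDirectory() as workdir:
    memory = MemoryArtifact(Path(workdir) / "memory.json")
    memory.record("premises", "Billing runs in UTC, not local time")
    memory.record("decisions", "Do not touch the schema migration")

    # A "fresh session" knows nothing except what the file tells it.
    fresh = MemoryArtifact(Path(workdir) / "memory.json")
    briefing = fresh.briefing()
    print(briefing)
```

The design choice worth noticing is who writes the file. In this sketch the operator (or the loop the operator designed) records the premise at the moment it is established, rather than trusting the agent to carry it through the next compaction.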

There is a version of this that, if you squint, is the new operator's actual core problem: how do you build durable systems out of components that forget at the same rate as they think? The answer is not "wait for the components to stop forgetting." The components will get better at not forgetting, but they will not stop forgetting on a meaningful timeline, because forgetting is a property of the architecture, not a flaw to be patched. The answer is: design the system to externalize the things that must not be forgotten, and accept that the agent's job is to think hard, and the operator's job is to remember.

The five-branch problem

Here is the diagnostic shape that defines the operator's daily work in the new shape, and which I think will be the thing that distinguishes operators-who-flourish from operators-who-stall over the next decade. In the old world, when something did not work, there were two branches: the system was broken, or the operator was wrong about what the system was supposed to do. You diagnosed by going from the failure backwards through the system and through your assumptions about it.

In the new world, there are five branches:

1. The system is broken.
2. The operator was wrong about what the system was supposed to do.
3. The agent is confused, in the sense that its reasoning trace is internally coherent but built on a premise the operator did not intend.
4. The specification is ambiguous, in the sense that two reasonable readings of it produce two different correct behaviors.
5. The model has drifted, in the sense that the same specification produced different outputs this week than it did last week.

The operator now has to be able to tell, in real time, which of these five branches a given failure is on. The diagnostic move is not the same on each branch — branch 1 is an old-shape system debug, branch 2 is the operator updating their mental model, branch 3 is a trace-reading move, branch 4 is a specification rewrite, branch 5 is a regression-against-known-good-version move — and the cost of being wrong about which branch you are on is high, because each branch's correct response is the wrong response on the other four.
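The five branches and their moves can be written down as a small lookup, which is roughly how I hold them in my head. This is a sketch under loud assumptions: the `Evidence` fields, the `triage` function, and especially the order in which the checks run are my own illustrative choices, not a procedure anyone has validated. In practice the evidence is rarely this clean.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Branch(Enum):
    SYSTEM_BROKEN = 1
    OPERATOR_WRONG = 2
    AGENT_CONFUSED = 3
    SPEC_AMBIGUOUS = 4
    MODEL_DRIFTED = 5


# The diagnostic move that fits each branch, paraphrased from the text above.
DIAGNOSTIC_MOVE = {
    Branch.SYSTEM_BROKEN: "old-shape system debug",
    Branch.OPERATOR_WRONG: "update the operator's mental model",
    Branch.AGENT_CONFUSED: "read the trace for the wrong premise",
    Branch.SPEC_AMBIGUOUS: "rewrite the specification",
    Branch.MODEL_DRIFTED: "regress against a known-good version",
}


@dataclass
class Evidence:
    """Hypothetical yes/no observations an operator might gather about a failure."""
    system_healthy: bool
    operator_model_correct: bool
    output_matches_known_good: bool
    spec_has_single_reading: bool
    trace_premise_matches_intent: bool


def triage(e: Evidence) -> Optional[Branch]:
    """Return the first branch whose check fails, or None if nothing is flagged.

    The ordering here is an assumption made for illustration only.
    """
    checks = [
        (e.system_healthy, Branch.SYSTEM_BROKEN),
        (e.operator_model_correct, Branch.OPERATOR_WRONG),
        (e.output_matches_known_good, Branch.MODEL_DRIFTED),
        (e.spec_has_single_reading, Branch.SPEC_AMBIGUOUS),
        (e.trace_premise_matches_intent, Branch.AGENT_CONFUSED),
    ]
    for passed, branch in checks:
        if not passed:
            return branch
    return None


evidence = Evidence(
    system_healthy=True,
    operator_model_correct=True,
    output_matches_known_good=True,
    spec_has_single_reading=False,
    trace_premise_matches_intent=True,
)
branch = triage(evidence)
print(branch.name, "->", DIAGNOSTIC_MOVE[branch])
# prints: SPEC_AMBIGUOUS -> rewrite the specification
```

The lookup is the easy part. The hard part, and the part the seventy percent figure below refers to, is filling in the evidence correctly in the first few seconds.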

This is the new operator's instrument. Reading a failure and knowing within a few seconds which of the five branches it is on. I am, again by my own count, getting to maybe seventy percent on this. The other thirty percent costs me time. The operators around me, the ones I work with most often, are at similar percentages. I do not know any operator who is at ninety percent yet. I expect that to change in the next two or three years, and I expect the operators who get to ninety percent will be the operators who shaped what operating-an-agentic-system means at the architectural level.

A sixth branch is emerging and not yet stable enough to label cleanly: a new, more powerful version of the model breaks things that worked perfectly under the previous variant. I have begun seeing this often enough that I expect it to be branch six within a year.

What got easier

I do not want to make this all sound harder. Some things got dramatically easier, and the easier-things are the ones that, when I sit with them, give me the most reason for emphatic optimism about the next decade.

The biggest one is root-causing across stack boundaries that no single operator could have held in working memory before. I do not say this lightly. There is a class of problem — the ones that span seven services, three vendor APIs, two regions, and a database schema migration in flight — that, in the previous era, you could only debug if you happened to have the entire mental model of the integration in your head. Most operators did not have that. Most operators worked from approximations. The agentic shift gave the operator a partner that can hold the entire integration in working memory, in a way no person can, and the operator's job became directing that partner across boundaries the operator could see were relevant but could not have themselves crossed. This is not a nostalgia point. This is a real, measurable lift, and operators who are not yet using their agents this way are leaving an enormous amount of capability on the table.

The second easier-thing is producing the artifacts the work requires that are not the work itself. The post-mortem document. The architecture diagram. The board update. The runbook. The handoff note for whoever picks the project up next. These used to be the operator's tax: necessary, time-consuming, never quite as good as you wanted them, and disproportionately likely to be skipped under load. They have, in the new shape, become near-trivial to produce well, and the second-order consequence is that the operator's work is more documentable, more transferable, more agent-and-human-takeoverable than it has ever been. The institutional memory problem in software was, all along, a writing-stamina problem. The agentic layer just solved the writing-stamina problem.

The third easier-thing, and this is the one I am still calibrating, is permission-to-attempt. There is a class of project that, in the previous era, you simply did not start, because the cost of attempting it was too high relative to the probability it would work. The threshold has moved. There are projects I am attempting now that I would not have attempted in 2023, because the cost of attempting them then was an order of magnitude higher than it is now, while the probability of success was approximately the same. The shape of what is worth attempting has changed. The amount of potential experimentation this democratizes and unleashes cannot be overstated.

The recognition

Here is the thing about being the kind of operator who has been doing this work for a while. The work, from inside the doing of it, has always felt the same shape. There has always been a system that nobody fully understood. There has always been a moment of looking at the system, noticing what it was actually doing as opposed to what it was supposed to be doing, and adjusting. There has always been a quiet conversation between the operator and the system, where the operator listened to the system tell it what was wrong, and the operator told the system what to do next.

The conversation now has a third party in it. The third party is the agent. Calling the agent a tool is wrong; tools do not have their own behaviors and drift and readings of what they have been told. Calling the agent a colleague is also wrong; colleagues have continuity of self across sessions, remember last Tuesday, have career arcs and professional commitments. The agent is a thing the language has not caught up to yet, and the people who are casually reaching for "AI" or "tool" or "assistant" are, in each case, naming the wrong part of the elephant. We will have a word for it in five years. We do not have one now. In its absence, I have been calling them, privately, interlocutors. They talk back. They notice things. They are, in a specific operational sense, present.

The recognition that comes with this kind of work, every once in a while, is the kind that arrives when the shape of what you are doing crystallizes for a second and you can see what you have actually been doing rather than the proxy that the title and the calendar have been telling you about. What I had been doing was operating, the way I have always been operating, in the way that the origin essay names. The thing being operated had moved up a floor. I was still in the same building. I was just on a different storey.

A short history of allowed-time-to-adapt

I want to close by placing this in the only frame that I think the next decade survives being placed in, which is the historical one.

The operator's job has been recognizably the same job since at least the industrial age. Someone has to know how the system actually works, in detail, well enough to fix it when it breaks. The specifics of "the system" have changed every fifty years or so — looms, then engines, then production lines, then mainframes, then networks, then platforms, then services, now agents — and at each phase shift, the operators who did the work felt, briefly, that the world had ended and the new world made no sense. They were right about the first half. The world they had been operating in had ended. They were wrong about the second half. The new world made sense; it was just one floor up from the old one, and the operators had to climb.

The pattern, if you let yourself read it, is one of cyclical reinvention. The industrial age told us the human was an extension of the machine. The information age told us the human was a designer of systems the machine ran inside. The agentic age is telling us, slowly, that the human is the operator of an interlocutor that operates a system, which is something we do not yet have a clean name for but which fiction has been describing in passing for half a century if you knew where to look. We have been here before. We will be here again. What is different this time, the thing I think the next decade will be defined by, is how compressed the allowed-time-to-adapt has become.

The industrial age gave us about eighty years to figure out what we were doing. The information age gave us about forty. This one, by the look of the cadence so far, is giving us closer to ten. The compression is the news, not the change itself. We have always reinvented ourselves after a crisis of identity. The pattern is older than the technology. What we are negotiating now is whether we can keep doing it on a timeline that is contracting faster than the previous one did, and whether the institutions and habits and ways of training operators that we built for the slower cadence can be retrofitted for the faster one in time.

I think we can. I am, on the whole, an emphatic optimist about this, in the way that you have to be an emphatic optimist when the alternative is not emphatic pessimism but learned helplessness. We have been here before. We have always invented a new kind of operator on the other side of these phase shifts. The operator is being invented again right now. Some of the operators who are in the middle of inventing it are, in the literal sense, reading this essay. Some of the others are running while reading. Most of the others are agents that have not yet been named.

This is, on its best days, an age of wonder. Imagination is closer to being the limit than it has been at any prior moment in my career, and probably than it has been at any prior moment in the careers of anyone I know. The constraints that used to bind what an operator could attempt have moved, and the ones that have replaced them are softer and more renegotiable than the ones they replaced. Huxley wrote a brave new world that was a warning. The brave new world we are actually getting is, on the days it is going well, neither warning nor utopia. It is just new. Operators have always known how to work in the new, which is the only thing operators have ever really been for.

I have always been here. I have, in the literal and the metaphorical sense, just gone up one floor.

The work, at its core, is the same work. The window I am looking out of is higher.

—TJ