The post-search internet, briefly.

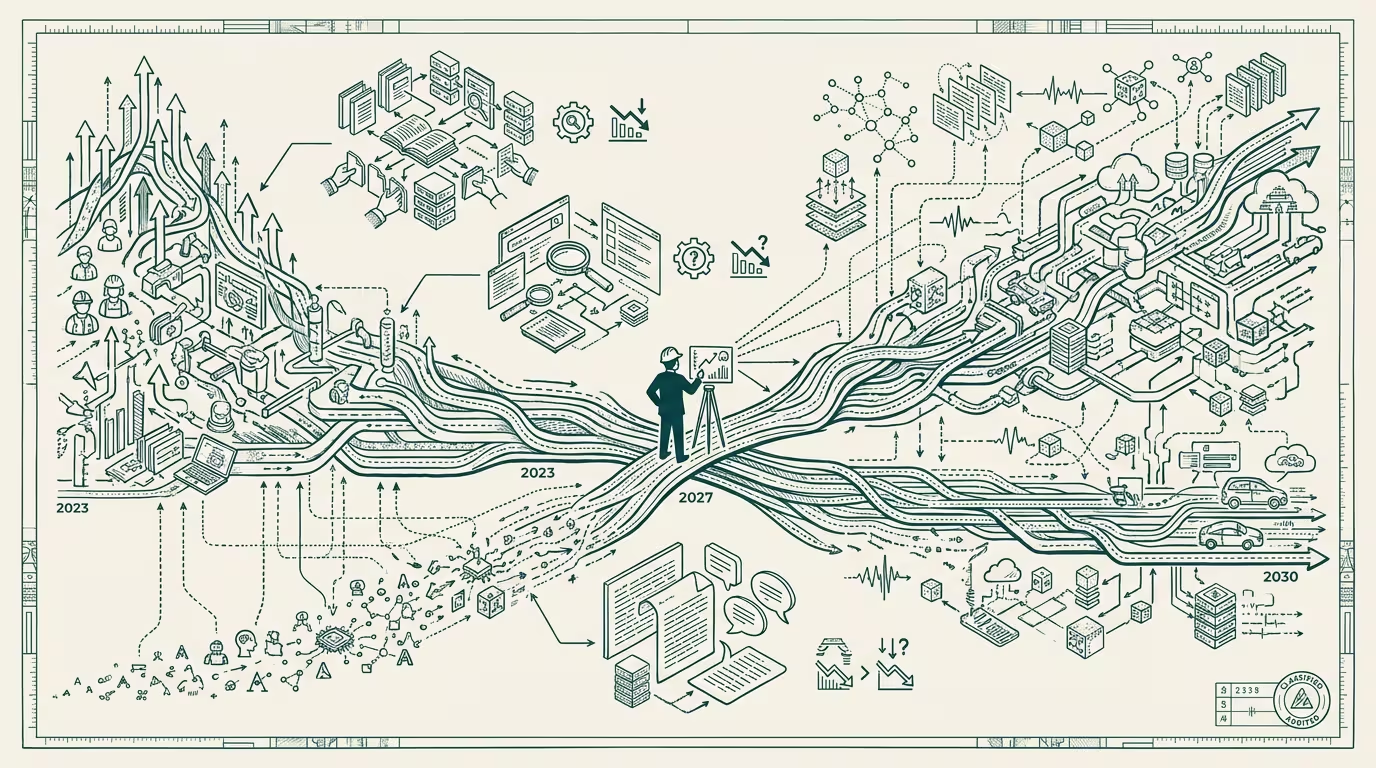

The post-search internet is closer than the consensus believes. The shape is sketchable. The shape is also, by every available measure, two to three years from being the dominant substrate for what we now call "web traffic," and the implications for content economics are large enough to be worth naming explicitly even before the substrate fully arrives.

The forecast is this. By 2027 the median visitor to a website is a software agent operating on behalf of a human principal. The agent's job is to retrieve, summarize, evaluate, and present. The human's job is to make the decision, often without looking at the source the agent retrieved from. The website is no longer the destination. The website is the substrate the agent reads on the way to producing the human-facing answer.

That substrate is not search-shaped.

Search-shaped means: indexed for keywords, ranked by inbound authority, monetized by attention. A human searches, ranks the results subjectively, clicks one, reads, decides. The advertising model rides on the human seeing the page. Twenty-five years of consumer-internet business model is built on the assumption that the visitor is the consumer.

Agent-shaped means: retrieved by structured query, evaluated against the agent's principal's criteria, summarized into a decision-ready frame. The agent does not see the advertising. The agent does not click around. The agent reads, extracts, returns. The human, on the other side of the agent, sees one screen with the answer.

Several things break.

The advertising-supported content layer breaks first because the consumer-of-record is no longer human. There is no impression to count. There is no click to attribute. There is no funnel down which the human moves from awareness to consideration to purchase, because the agent collapses the funnel into a single fetch-and-evaluate operation. The publisher who built a business on display advertising is, in 2027, looking at a CPM line that has decoupled from page-views in a way the business model cannot absorb. Some publishers will pivot to subscription. Some will pivot to direct-relationship-with-the-agent, which is a different business model again. Some will, of course, not pivot.

The SEO industry breaks. Search-engine optimization is a craft built on optimizing for the human reader's downstream behavior, mediated by the search engine's ranking model. Replace the human reader with the agent and the optimization target shifts from "rank for the keyword" to "be retrievable, parseable, and trustworthy to the agent." The agent does not care about meta descriptions. The agent does care about structured data, schema markup, the site's track record of factual accuracy, and the latency of retrieval. The SEO consultancy of 2024 has, by 2027, either evolved into "agent-readable-content consulting" or gone out of business.
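To make "structured data, schema markup" concrete, here is a minimal sketch of a page describing itself to a machine reader with schema.org JSON-LD. The `Article` type and the `headline`, `author`, `datePublished`, and `url` properties come from the schema.org vocabulary; the helper names, the example title, date, and URL are placeholders invented for illustration, and nothing here claims that any particular agent reads these fields.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a schema.org Article description as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "url": url,
    }


def as_script_tag(doc):
    """Serialize the dict as the <script> element typically placed in the page head."""
    return '<script type="application/ld+json">' + json.dumps(doc) + "</script>"


# Placeholder values, not taken from any real page.
example = article_jsonld(
    headline="An example post",
    author_name="Example Author",
    date_published="2024-01-01",
    url="https://example.com/an-example-post",
)

if __name__ == "__main__":
    print(as_script_tag(example))
```

The point of the sketch is the contrast with a meta description: the same facts a human would infer from layout are stated as typed fields a parser can extract in one pass.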

The site-architecture-as-funnel pattern breaks. Every consumer-facing site spent fifteen years building a funnel: home page, category page, product page, checkout. The agent does not use the funnel. The agent goes directly to the data, ignores the marketing, retrieves the spec, and either initiates the transaction at the API endpoint or summarizes the choice for the human principal. The funnel was designed for a human attention pattern that the agent does not have. The sites that survive are the ones whose underlying data is queryable independent of the funnel UX.

The content-creator-as-publisher economy is the most interesting case. The independent creator publishing on Substack, on a personal site, on Medium, has been operating in a discoverability environment shaped by four channels: RSS, social-media algorithms, organic search, and newsletter lists. All four are human-mediated discovery. None is agent-mediated. The creator who publishes thoughtful original content into a 2027 internet where the median reader is an agent acting for a human principal is a creator whose content is being read more than the page-view count suggests, and who is getting paid less than that reading rate would imply, because the existing monetization apparatus did not anticipate the substrate change. The structural fix is direct-relationship monetization (subscription, sponsorship, direct-paid-API access for agents) that bypasses the broken display layer.

The implications for content economics come down to five concrete moves.

First, the platform that successfully prices agent-traffic separately from human-traffic captures the next decade of the publishing-monetization layer. The agent is willing to pay for high-quality retrievable content. The human is willing to pay for the original-experience, the personal connection, the authorial voice. Pricing them as the same thing is the legacy mistake. Pricing them as different products with different access tiers is the structural fix, and the platform that ships it first wins the category.
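A minimal sketch of what "different products with different access tiers" could look like at the request level. Everything here is an assumption for illustration: the `Tier` fields, the prices, the `X-Agent-Key` header, and the user-agent markers are hypothetical, and substring matching on a user agent is a weak signal that a real platform would replace with authenticated agent credentials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    price_per_request_usd: float  # hypothetical pricing
    includes_full_page: bool      # humans get the page, agents get the data


# Illustrative numbers only; the essay does not propose specific prices.
AGENT_TIER = Tier("agent", 0.002, False)
HUMAN_TIER = Tier("human", 0.0, True)

# Hypothetical substrings that suggest automated traffic.
AGENT_MARKERS = ("bot", "agent", "crawler")


def classify(headers):
    """Pick an access tier from request headers (exact-case keys assumed)."""
    # A declared agent key (hypothetical header) is the strongest signal.
    if headers.get("X-Agent-Key"):
        return AGENT_TIER
    user_agent = headers.get("User-Agent", "").lower()
    if any(marker in user_agent for marker in AGENT_MARKERS):
        return AGENT_TIER
    return HUMAN_TIER


if __name__ == "__main__":
    print(classify({"User-Agent": "Mozilla/5.0 (X11; Linux x86_64)"}).name)
    print(classify({"User-Agent": "ExampleAgent/1.0"}).name)
```

The design choice worth noticing is that the tier, not the page, is the unit being priced: the same underlying content is served under two different contracts.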

Second, the licensing layer for agent-access becomes a real category. By 2027 the New York Times has signed an agent-access licensing deal. So has Bloomberg. So have the major specialty publishers. The deal terms will be set in 2025 and 2026, and the terms will, of course, favor the publishers with negotiating leverage. The independent creator does not have that leverage; the licensing layer for the long-tail creator-class is going to be intermediated by some new platform whose name we do not yet know.

Third, the content-evaluation layer (which agents use to decide which sources to retrieve from) becomes high-leverage. Agents need a trust signal: which sources are reliable, which sources have a track record, which sources honestly disclose their funding and methodology. The trust-signal infrastructure is built either by the frontier labs (the agent's authoring lab decides what the agent trusts) or by an independent layer (a CDN-of-trust, structurally analogous to certificate authorities for HTTPS). The independent layer is the more durable answer; the frontier-lab version is the version that ships first.

Fourth, the human-attention-economy does not disappear. It contracts. The original-content reader, the long-form essay reader, the listener-to-the-podcast, the watcher-of-the-documentary still exists, and the willingness-to-pay for the original-experience increases as agent-mediation grows because the original becomes the scarce thing. Substack-class platforms do well in this scenario. Display-advertising-supported platforms do badly.

Fifth, and most operationally relevant, the search-engine business model is the load-bearing piece that gets repriced. Google's search business is roughly $200B/year of advertising revenue tied to the click-through pattern that the agent breaks. Some fraction of that pivots to agentic-search-as-a-service (the agent calls Google's index, Google charges per call). Some fraction is captured by the frontier-lab's own retrieval layer (the agent does not call Google because the agent's lab built its own retrieval layer). The repricing is the largest single value-transfer event in the consumer-internet's twenty-five-year history, and it is happening on a 2024-to-2028 curve.

The honest forecast: the post-search internet is what the consumer-web is becoming, the substrate-change is irreversible, the advertising-supported content layer is in the early phase of a decade-long contraction, and the platform shape that wins is the one that prices agent-access and human-access as different products with different tiers. The publishers who price them as the same product, in the legacy display-advertising model, will be repriced down to the agent-evaluable component of their offering, and the agent-evaluable component is, by structural definition, lower-margin than the human-attention component used to be.

That is the next platform shape. The shape is closer than the discourse believes. The fights about who builds the agent-readable-content layer, the licensing layer, and the content-trust-evaluation layer are starting now. The fight about whether the substrate has actually changed is the fight that, ten years from now, looks the way the "is the internet a fad" fight looked twenty years ago: the people who got it right early built the next platform-tier company. The people who held the legacy view ran a contracting business. Both outcomes were predictable from the substrate analysis. Neither felt sudden from the inside.

The post-search internet is briefly here. It is going to be here for a long time. The operators who recognize it in 2024 are the operators who run the platforms of 2030.

—TJ