The 'rich agent / poor agent' divide is coming.

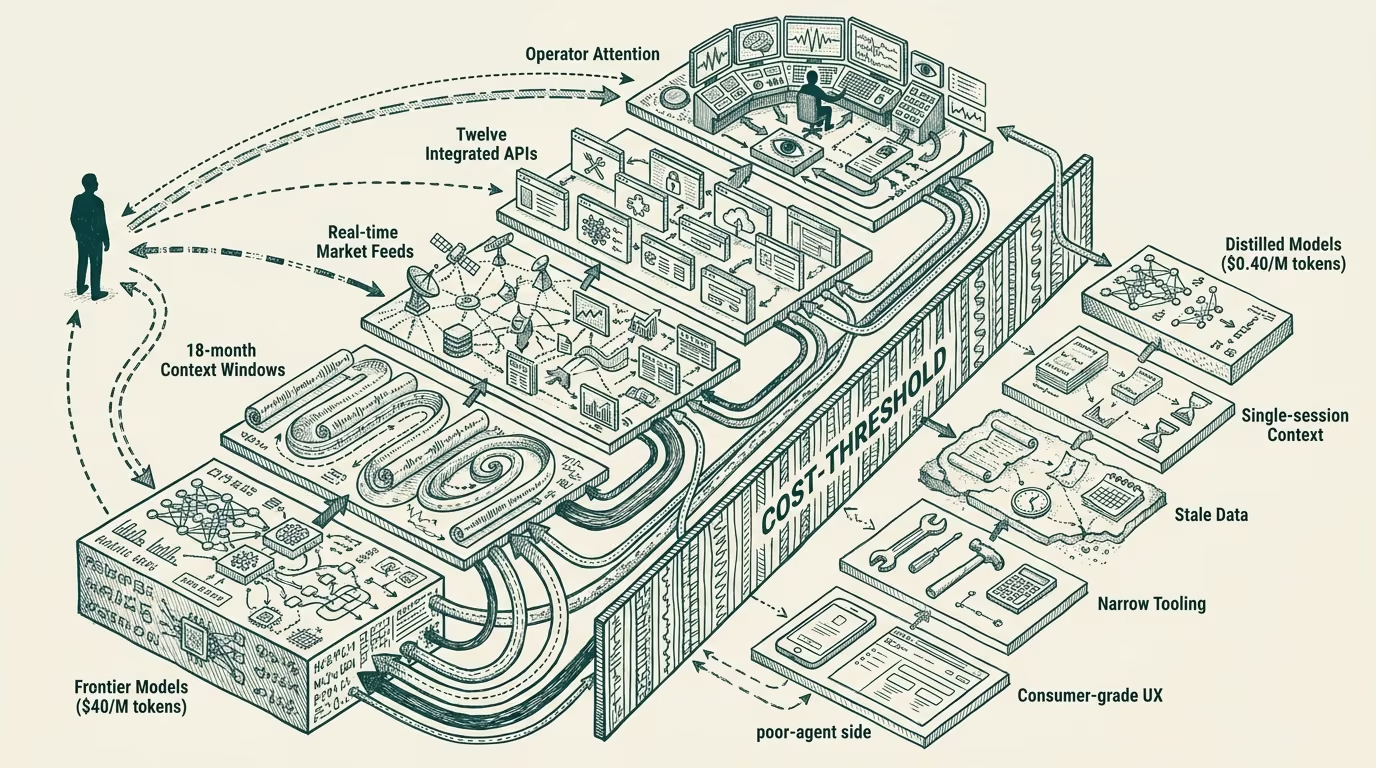

A user with a $200-a-month agent subscription opens her laptop in 2027. The agent on the other end has access to her calendar, her email, her files, real-time market data, the latest frontier-model release shipped that morning, and a context window that holds the last 18 months of her work. It runs against a model class that costs the provider roughly $40 per million tokens to serve. It can call out to twelve different tools without asking, including paid APIs that the subscription tier covers. It remembers her. It reads the room.

A user without that subscription opens her laptop the same morning. The agent on the other end runs against a distilled small model, costs roughly $0.40 per million tokens to serve, has access to a context window that maxes out at the current conversation, and will not call out to anything that costs the provider more than a fraction of a cent. It does not remember her between sessions. It does not read the room.

These are two different products. They are sold under the same word.

This piece is a futurist case study, in the carve-out sense of [ADR-0031](decisions/0031-futurist-register-carveout.md). Forward projection from a 2024 vantage about how the agent-layer market splits over the next three to four years. The forecast is conditional, and the part that holds is what to do about it.

The two tiers, named precisely

Rich agents are the ones with access to compute, context, and integration. Compute means a frontier-class model running with adequate inference budget per query. Context means real-time data, persistent memory across sessions, and a context window large enough to hold the operator's full work surface. Integration means tool-use depth, with paid-API access the subscription tier underwrites.

Poor agents are the ones without. Compute is a distilled small model, often an open-weights variant the provider can run on cheap commodity inference. Context is the current conversation only, no persistent memory, and a small enough context window that the operator has to re-introduce themselves each session. Integration is whatever the free tier can sustain at near-zero marginal cost.

The two tiers exist now, in early 2024, in nascent form. The compute-and-context gap is already visible in the price differential between consumer and enterprise tiers of the major AI vendors. By 2027 the gap is structural. By 2028 it is the load-bearing fact of the agent-layer market.

Three axes of compounding

The divide compounds across at least three named axes, and the compounding is what makes it structural rather than transient.

First, model capability. Frontier models in 2027 will sit two to three model-generations ahead of the small models distilled for the poor-agent tier. The gap is a function of training cost: a frontier model in 2027 costs ~$1B+ to train; the distilled variant that ships to consumer free tiers gets shipped one-to-two generations behind because the economics only work that way. That gap is not closing. It is widening, because the rich-agent tier funds frontier training, and the poor-agent tier consumes the leftovers a year-plus later.

Second, context provision. The rich agent has access to the operator's calendar, email, files, browser history, and the persistent memory built up across all prior interactions. The poor agent has the current conversation. The compounding effect is that the rich agent gets better at the operator's context every day, and the poor agent starts from zero every session. Over twelve months of use, the rich-agent surface accumulates value that the poor agent structurally cannot.

Third, tool integration depth. The rich agent's subscription tier covers paid-API access (a search-agent that can run twenty refined queries; a research-agent that can pull from a dozen subscription databases; a coding-agent that can run real test suites in real sandboxes). The poor agent gets the free-tier API access (one search query rate-limited to 100 calls a day; the public Wikipedia subset; the limited-throughput sandbox). The cost of an agent's tool surface is paid in cents per query. Multiply by the median user's daily query volume by 2027 and the difference between rich and poor agents on this axis alone is a structural performance gap of three to five times.

Take any one of these axes alone and you have a meaningful divide. Take all three compounding together, across two years of usage, and you have a structural stratification that does not get bridged by the user moving up a tier. The rich agent that has been learning her work surface for eighteen months produces qualitatively different output than the rich agent she would just-now subscribe to.

The user-class consequence

The structural consequence is a user-class stratification that maps onto household-tier subscription economics. The household with a rich-agent subscription gets a different relationship with its tools than the household without. The rich-agent household uses agents to negotiate medical bills, prepare for difficult conversations, run research for purchases of $5,000+, draft and review legal documents, manage their financial planning. The poor-agent household uses agents to summarize emails and draft replies.

The gap is not a productivity tier. The gap is a category-of-use tier. The rich-agent household has agents in the loop on every meaningful decision the household makes. The poor-agent household has a slightly better Google.

This is not the technological-determinism shape of the argument. The shape is closer to the broadband-access-divide of the early 2010s, where households with good broadband ran a categorically different relationship with the internet than households with dialup or capped mobile data. The technology was the same; the access tier produced two different products. Same word, two different products.

The 2010s broadband divide had clear remediation paths: public investment in infrastructure, regulatory pressure on incumbents, market entrants priced for the underserved tier. The agent-layer divide has analogues for some of these but is structurally harder because the rich-agent tier's value is partially the persistent learning that takes time to accrue. Even if the policy unlocks the access tier, the eighteen-month learning gap remains as a structural lag. A late-arriving subscriber gets a smarter starting point but spends a year catching up to where the early subscriber's agent already is.

The operator-class question

For an operator deciding which agent-layer product to build between 2025 and 2028, the question shapes up cleanly. Are you shipping to the rich-agent user, the poor-agent user, or trying to span the gap?

Shipping to the rich-agent user means a product priced at $50-200/month, optimized for context-and-integration depth, with a willingness to lose users who cannot pay. The economics work because the per-user revenue is high and the willingness-to-pay among the served cohort is real. The risk is that the rich-agent tier is a smaller market than the headline-numbers imply.

Shipping to the poor-agent user means a product priced free or near-free, optimized for what's possible at distilled-model inference cost, with the operator-class understanding that the product is genuinely worse than the rich-agent equivalent and the user-class who cannot afford the alternative is the user-class you serve. The economics work through scale and ad-tier monetization. The risk is that the product becomes structurally embarrassing relative to the rich-agent equivalent, in the way mobile-only access to the early 2010s internet became structurally embarrassing relative to broadband.

Spanning the gap is the third option and the hardest one. A product designed to deliver rich-agent-class outcomes at poor-agent-class price points requires both breakthrough efficiency on the compute side and architectural cleverness on the context side. Some candidate paths exist (heavy on-device inference, federated context-pooling, public-data-anchored agents). None of them obviously work at scale yet. By 2027 we will know which of them, if any, did.

The harder version of the operator-tier question is the user-class consequence the operator's choice produces. A product shipped to the rich-agent tier reinforces the divide. A product shipped to the poor-agent tier serves the underserved cohort but accepts the structural performance gap. A product spanning the gap is the rare both-and. Operators with the means to choose should know which side of the divide their product widens, narrows, or holds steady on.

What survives all of this

The divide is coming. The forecast is two to three years out from this 2024 vantage. The compounding axes are already visible in early-2024 product tiers. The user-class consequence will be the operating story of the late-decade agent layer. The operator question is one of the consequential product-strategy choices builders face between now and 2028.

What I want every operator reading this to take seriously is that the divide is not an accident of pricing strategy that gets corrected by a future market entrant. The compounding makes it structural. The user-class consequence makes it ethically loaded. The operator-grade choice is which side of the gap your product serves, and the cost of that choice is one part of what your product is, in the world it ships into.

Build for the side you can defend on the part that holds. The gap will be there either way.

—TJ