Three OTAs shipped concierge AI. All three failed the same way.

Through late 2023 and into 2024, the three major U.S. and European online travel agencies (OTAs) each shipped a concierge-class AI feature in their consumer-facing booking experience. The launches came within roughly four months of each other, the demos shared a similar structure, and every feature underperformed its launch demo in ways that have since led to quiet de-prioritization, rebuilds, or repositioning of the launched products. This essay walks through the launches at the level of public observation, names the shared failure mode, and draws the operator-class lesson for the next cohort of vendors building consumer-facing concierge AI in the OTA category.

What the three OTAs shipped

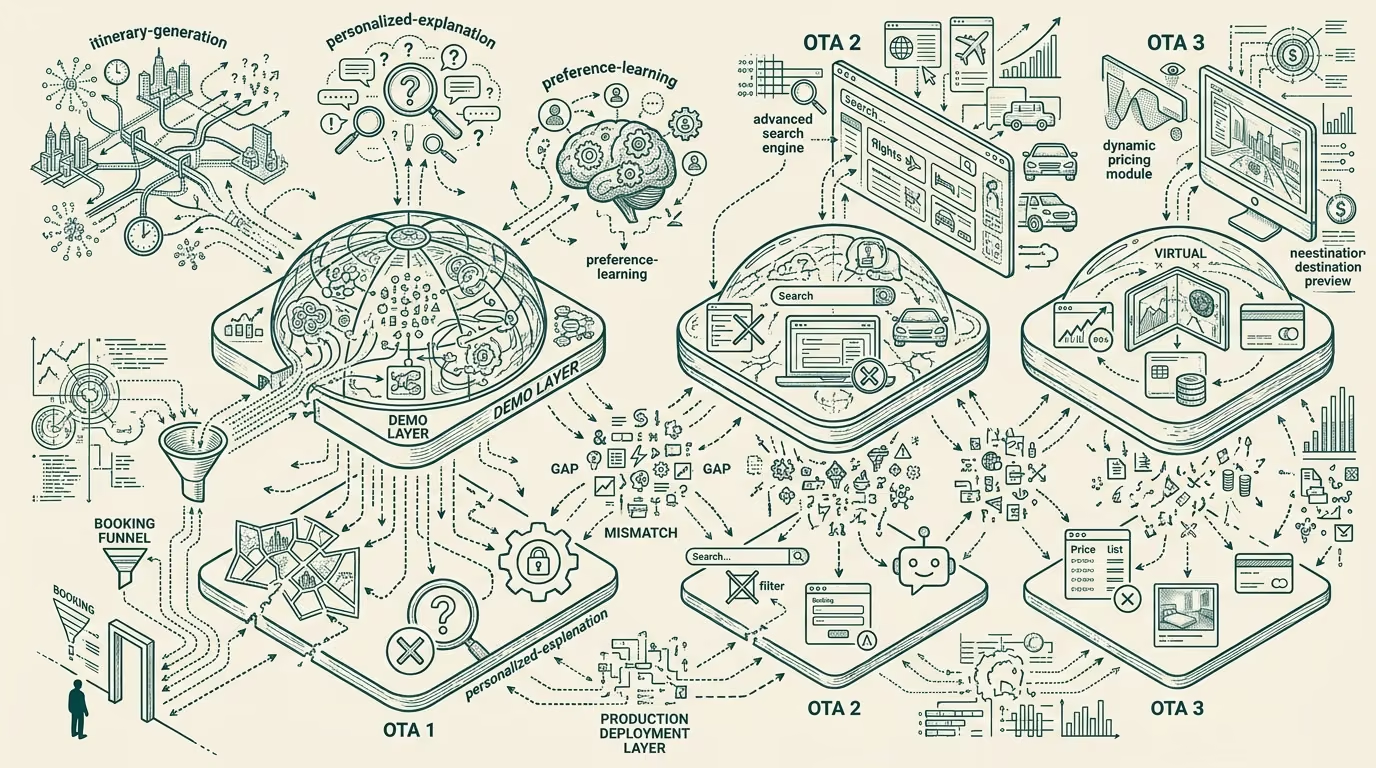

The first launch, in late 2023, was a chat-based trip-planning agent integrated into the OTA's booking funnel. The launch demo showed the agent taking a free-text trip request, asking a small set of clarifying questions, and producing an itinerary with hotel-and-flight suggestions, daily routing, and budget-aware alternatives. The demo was polished, the production deployment was thinner, and the rollout to the broader user base was rate-limited and quickly de-prioritized as the actual usage data came in.

The second launch, in early 2024, was a recommendation-and-explanation feature that overlaid the OTA's existing search results with AI-generated explanations of why a particular hotel or flight was being recommended. The launch demo showed the explanations as detailed, traveler-personalized, and fact-grounded against the property's reviews and the traveler's stated preferences. The production deployment surfaced explanations that were noticeably more generic and occasionally factually wrong about the property, and reviewers and journalists wrote critical pieces about them within weeks of the broad rollout.

The third launch, in mid-2024, was an end-to-end agentic-booking flow where the user could describe a trip in conversational text and the agent would produce a complete bookable itinerary with one-click confirmation. The launch demo showed the agent handling a complex multi-stop request with budget constraints and dietary preferences, returning a complete itinerary with hotel, flights, restaurant reservations, and ground transport. The production deployment was substantially narrower in scope and frequently failed on requests that the demo had handled cleanly, and the company rebuilt the underlying agent twice in the following six months.

The shared failure mode

The common pattern across all three launches was the gap between demo capability and production capability, with the gap concentrated in two structural areas.

The first area is what the AI vendor class calls grounding: the discipline of constraining the model's outputs to verifiable facts about the actual supply graph (the actual hotels, the actual prices, the actual availability) rather than letting the model generate plausible-sounding but unverified content. The launch demos in all three cases were grounded against curated example trips with hand-checked supply data. The production deployments operated against the live supply graph in real time, with rate-limited access to the underlying APIs and with caches that could serve stale prices and availability. The model sometimes generated hotel and restaurant names that did not exist in the supply graph or did not exist at all. The grounding gap was the largest single contributor to the production-vs-demo disappointment.
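To make the grounding check concrete, here is a minimal sketch of a post-generation filter. Everything in it is an assumption for illustration: the `Suggestion` type, the `supply_index` dictionary standing in for a live supply-graph lookup, and the 5% price tolerance are hypothetical, not a description of what any of the three OTAs built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    """One item the model proposed (all fields are hypothetical)."""
    kind: str            # e.g. "hotel" or "restaurant"
    name: str
    quoted_price: float


def ground_suggestions(suggestions, supply_index, price_tolerance=0.05):
    """Split model suggestions into grounded and rejected lists.

    supply_index maps (kind, normalized name) -> current live price.
    It stands in for a lookup against the real supply graph.
    """
    grounded, rejected = [], []
    for s in suggestions:
        key = (s.kind, s.name.strip().lower())
        live_price = supply_index.get(key)
        if live_price is None:
            # Name is not in the supply graph: treat it as invented.
            rejected.append((s, "not in supply graph"))
        elif abs(s.quoted_price - live_price) > price_tolerance * live_price:
            # Name exists, but the quoted price has drifted (e.g. stale cache).
            rejected.append((s, "price mismatch"))
        else:
            grounded.append(s)
    return grounded, rejected


# Illustrative data only; the property names are made up.
supply_index = {
    ("hotel", "harbor view inn"): 180.0,
    ("restaurant", "casa verde"): 45.0,
}
suggestions = [
    Suggestion("hotel", "Harbor View Inn", 182.0),        # within tolerance
    Suggestion("hotel", "Grand Meridian Palace", 150.0),  # not in the index
    Suggestion("restaurant", "Casa Verde", 30.0),         # stale price
]
grounded, rejected = ground_suggestions(suggestions, supply_index)
```

The point of the sketch is the ordering: the model proposes, the supply graph decides, and anything that cannot be verified never reaches the traveler.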

The second area is the demo-to-production scope contraction that follows from the safety-and-reliability review. The launch demo runs the full agentic capability against a curated example. The production deployment has to handle the long tail of malformed user requests, ambiguous queries, and edge cases that the demo curated away. The production deployment also has to handle the regulatory-and-consumer-protection review that constrains what the agent can autonomously commit to (booking-and-payment authorization, refund policies, accessibility requirements). The result is a production agent that is meaningfully narrower in scope than the demo agent, with the narrowing happening late in the engineering cycle and the marketing-and-launch positioning generally not catching up.
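One way to picture the scope contraction is as an explicit policy table that decides what the agent may do autonomously, what requires user confirmation, and what falls outside the launch scope. The sketch below is hypothetical: the action names, the three-way `Decision` split, and the `LAUNCH_POLICY` contents are illustrative assumptions, not any OTA's actual review outcome.

```python
from enum import Enum


class Decision(Enum):
    AUTO = "auto"                  # agent may act on its own
    CONFIRM = "confirm"            # agent needs explicit user confirmation
    OUT_OF_SCOPE = "out_of_scope"  # agent hands off to the regular funnel


# Hypothetical launch policy: which action types the agent may take, and how.
LAUNCH_POLICY = {
    "search_hotels": Decision.AUTO,
    "build_itinerary": Decision.AUTO,
    "book_hotel": Decision.CONFIRM,
    "charge_card": Decision.CONFIRM,
}


def gate_action(action_type, policy=LAUNCH_POLICY):
    """Return how the agent may proceed; unknown actions are out of scope."""
    return policy.get(action_type, Decision.OUT_OF_SCOPE)
```

Under this policy, `gate_action("search_hotels")` returns `Decision.AUTO`, `gate_action("charge_card")` returns `Decision.CONFIRM`, and an unlisted action such as `gate_action("issue_refund")` returns `Decision.OUT_OF_SCOPE`. Defaulting unknown actions to out-of-scope means the table itself is an honest statement of what shipped, which marketing can read as easily as engineering.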

The two areas compound. The under-grounded production agent that has also been scope-contracted ends up looking, in user-facing terms, like an under-performing version of the demo. The user-facing reviews and the trade-press coverage are not generous to that gap.

Why all three did this

The structural reason all three OTAs shipped the same shape of failure is that the OTA category in 2023-2024 was operating under competitive pressure that rewarded launching the demo before the production deployment was ready. The category was reading the moves of the other OTAs (and of general-purpose chat tools such as ChatGPT and Gemini, which were adding trip planning) as a signal that the AI feature had to ship in the booking experience or the OTA would be perceived as falling behind. The internal pressure to ship was strong. The internal advocacy for delaying the launch until the production deployment matched the demo was structurally weaker than the advocacy for shipping.

Additionally, the AI vendor partners the OTAs were working with (a mix of foundation-model providers, specialty AI startups, and internal teams) were in a phase of demonstrating capability rather than productizing it. The capability demonstrations were genuine. The path from demonstration to production had not yet been built. The OTAs that committed to a shipping schedule before the productization work was done shipped the gap that resulted.

This is not a uniquely OTA pattern. Consumer-tech companies broadly through 2023-2024 shipped a similar shape of demo-driven AI feature with similar gaps to production. The OTA-specific version landed harder because the OTA's user base compares the AI feature against an existing booking experience that already works well, whereas most consumer-tech products do not face that baseline comparison.

The operator-class lesson

For the next cohort of vendors building consumer-facing concierge-AI in the travel category (or in any category where the user expectation is calibrated against an existing baseline that already works), the lesson is to invert the demo-driven launch sequence. Build the production capability first against a narrow-but-grounded scope. Ship the production capability when the production version actually works at the scope that ships. Use the demo to show what the production version does, not what the production version aspires to.
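A production-first launch gate could look something like the sketch below: the launch scope is whatever clears a pass-rate bar on production-like evaluations, and nothing else. The function name, the thresholds, and the evaluation numbers are all hypothetical and chosen only to illustrate the idea.

```python
def launch_scope(eval_results, min_pass_rate=0.95, min_cases=50):
    """Return the request types that clear the bar on production-like evals.

    eval_results maps request type -> (passed, total), from runs against
    live supply data rather than curated demo trips.
    """
    scope = []
    for request_type, (passed, total) in sorted(eval_results.items()):
        # Require both enough evidence and a high enough pass rate.
        if total >= min_cases and passed / total >= min_pass_rate:
            scope.append(request_type)
    return scope


# Illustrative numbers only.
eval_results = {
    "single_city_hotel": (194, 200),      # 97% pass rate
    "round_trip_flight": (188, 200),      # 94%, just under the bar
    "multi_stop_with_dining": (21, 30),   # too few cases to judge
}
shippable = launch_scope(eval_results)
```

With these made-up numbers only `single_city_hotel` ships, and the demo would then be built from that request type alone.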

This is structurally hard inside a competitive-pressure environment that rewards the demo-first launch shape. The vendors who can hold to the production-first sequence have an advantage that compounds. For a demo-first vendor, the second launch (the rebuild after the first failed launch) is the one that actually ships a working product. A vendor that never has the failed first launch ships a working product sooner and skips the rebuild.

The three OTA failures of 2023-2024 are visible to anyone who reads the consumer-facing reviews and the trade-press coverage of the launches. The next cohort building in this category should read the failures as a structural lesson rather than as bad-luck stories. The failure mode is reproducible. The avoidance pattern is also reproducible. The structural read is to ground the production deployment, narrow the launch scope to what works, and align the marketing positioning to the production capability rather than the demo capability. That sequence ships working products. The OTA category will eventually run that sequence. The vendors who run it sooner capture the consumer-trust position the failed launches lost.

—TJ